Neural networks are a cornerstone of modern artificial intelligence, loosely inspired by the way human brains process information. At their core, these systems consist of interconnected nodes, or neurons, that work together to analyze data and identify patterns. Each neuron receives inputs, combines them using learned weights, applies an activation function, and passes the result to subsequent neurons.
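To make the idea of a single neuron concrete, here is a minimal sketch in plain Python. It assumes a sigmoid activation, and the input values, weights, and bias are hypothetical numbers chosen only for illustration; in a real network they would be learned from data.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Hypothetical inputs and parameters, chosen only for illustration.
activation = neuron_output(inputs=[0.5, -1.0, 2.0], weights=[0.4, 0.3, -0.2], bias=0.1)
print(f"{activation:.3f}")  # prints 0.401
```

A full layer simply repeats this computation for many neurons, each with its own weights and bias, and feeds the resulting activations to the next layer.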

This architecture allows neural networks to learn from vast amounts of data, making them incredibly powerful for tasks ranging from image recognition to natural language processing. The beauty of neural networks lies in their ability to adapt and improve over time. As they are exposed to more data, they refine their internal parameters, enhancing their accuracy and efficiency.

This learning process is akin to how humans gain expertise through experience. By leveraging layers of neurons, neural networks can capture complex relationships within data, enabling them to tackle intricate problems that traditional algorithms struggle with.

Key Takeaways

- Neural networks are loosely modeled on the human brain and learn patterns from complex data.

- Training involves adjusting weights through data exposure to improve accuracy.

- Optimization techniques enhance performance and reduce errors in neural network models.

- Neural networks are widely applied in areas like image recognition, natural language processing, and autonomous systems.

- Ethical and future considerations include bias mitigation, transparency, and integration with emerging technologies.

Training Neural Networks

Training a neural network involves feeding it a large dataset and adjusting its weights to reduce prediction error. During this phase, the network makes predictions from its current weights and compares them to the actual outcomes. The difference between predicted and actual results, measured by a loss function, is propagated backward through the network (backpropagation) to work out how much each weight contributed to the error, and an optimizer such as gradient descent then adjusts the weights accordingly.
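The predict, compare, and adjust cycle can be sketched with the smallest possible model: a single linear neuron fitted by gradient descent. This is an illustrative sketch, not a production training loop. The function name `train_linear_neuron`, the toy data (points on the line y = 2x + 1), and the learning rate and epoch count are all assumptions made for the example.

```python
def train_linear_neuron(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: predict with the current weights.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Update step: move each weight against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    loss = sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n
    return w, b, loss


# Toy data generated from y = 2x + 1 (an assumption for this example).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b, loss = train_linear_neuron(xs, ys)
print(f"w={w:.3f} b={b:.3f}")  # close to w=2.000 b=1.000
```

Real networks follow the same loop, except that the gradients for many layers of weights are computed automatically by backpropagation rather than written out by hand.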

This iterative process continues until the network achieves an acceptable level of accuracy. The quality and quantity of the training data are crucial for successful neural network training. A diverse dataset helps the network generalize better, reducing the risk of overfitting—where the model performs well on training data but poorly on unseen data.

Techniques such as data augmentation and regularization can be employed to enhance training effectiveness. Ultimately, a well-trained neural network can make reliable predictions and provide valuable insights across various applications.
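As one example of regularization, the sketch below adds an L2 penalty (often called weight decay) to a mean squared error loss. It is a minimal illustration under stated assumptions: the helper names and the default penalty strength of 0.01 are hypothetical, and other regularizers such as dropout work differently.

```python
def mean_squared_error(predictions, targets):
    """Average squared difference between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def l2_penalty(weights, strength):
    """Penalty that grows with the squared magnitude of the weights."""
    return strength * sum(w * w for w in weights)


def regularized_loss(predictions, targets, weights, strength=0.01):
    """Data-fit term plus a penalty that discourages large weights."""
    return mean_squared_error(predictions, targets) + l2_penalty(weights, strength)


# Two hypothetical models that fit the data equally well (zero error),
# but one relies on much larger weights.
small = regularized_loss([1.0, 2.0], [1.0, 2.0], weights=[0.5, -0.5])
large = regularized_loss([1.0, 2.0], [1.0, 2.0], weights=[5.0, -5.0])
print(small < large)  # prints True
```

Because the penalty favors the model with smaller weights when both fit the training data equally well, it nudges training toward simpler solutions that tend to generalize better.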

Optimizing Neural Network Performance

Optimizing the performance of neural networks is essential for achieving high accuracy and efficiency. Several strategies can be employed to enhance performance, including hyperparameter tuning, which involves adjusting parameters such as learning rate, batch size, and the number of layers. These hyperparameters significantly influence how well a network learns from data and can lead to substantial improvements in performance when optimized correctly.
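A simple way to tune a hyperparameter is a grid search: train once per candidate value and keep the one with the lowest loss on held-out validation data. The sketch below does this for the learning rate of a tiny linear model. The candidate values, the fixed budget of 50 epochs, and the toy data are assumptions chosen for illustration.

```python
def train(xs, ys, learning_rate, epochs):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b, n = 0.0, 0.0, len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def mse(w, b, xs, ys):
    """Mean squared error of the fitted line on a dataset."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy data from y = 2x + 1, split into training and validation sets.
train_x, train_y = [0.0, 1.0, 2.0, 3.0, 4.0], [1.0, 3.0, 5.0, 7.0, 9.0]
val_x, val_y = [5.0, 6.0], [11.0, 13.0]

candidates = [0.001, 0.01, 0.1]  # hypothetical search grid
results = {}
for lr in candidates:
    w, b = train(train_x, train_y, learning_rate=lr, epochs=50)
    results[lr] = mse(w, b, val_x, val_y)

best_lr = min(results, key=results.get)
print(best_lr)  # prints 0.1
```

With a fixed training budget, the smallest learning rates have not converged yet, so the largest stable candidate wins here. On a different problem the ranking could easily differ, which is why the search is run rather than assumed.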

Another critical aspect of optimization is model architecture. Different architectures can yield varying results depending on the specific task at hand. For instance, convolutional neural networks (CNNs) are particularly effective for image-related tasks, while recurrent neural networks (RNNs) excel in processing sequential data like time series or text.

By experimenting with different architectures and configurations, we can identify the most suitable model for a given problem, ultimately leading to better outcomes.

Applying Neural Networks in Real-world Scenarios

Neural networks have found applications across numerous industries, revolutionizing how businesses operate and make decisions. In healthcare, for example, they are used for diagnosing diseases from medical images with remarkable accuracy. By analyzing thousands of images, neural networks can identify subtle patterns that may elude human experts, leading to earlier detection and improved patient outcomes.

In finance, neural networks are employed for fraud detection and risk assessment. By analyzing transaction patterns in real-time, these systems can flag suspicious activities and help institutions mitigate potential losses. Similarly, in marketing, neural networks enable personalized recommendations by analyzing consumer behavior and preferences.

This level of insight allows companies to tailor their offerings effectively, enhancing customer satisfaction and loyalty.

Exploring Different Types of Neural Networks

| Metric | Description | Typical Range/Value | Importance |
|---|---|---|---|
| Number of Layers | Count of hidden and output layers in the network | 1 – 100+ | Determines model depth and capacity |
| Number of Neurons per Layer | Units or nodes in each layer | 10 – 10,000+ | Affects model complexity and learning ability |
| Learning Rate | Step size for weight updates during training | 0.0001 – 0.1 | Controls convergence speed and stability |
| Epochs | Number of complete passes through the training dataset | 10 – 1000+ | Impacts training completeness and overfitting risk |
| Batch Size | Number of samples processed before updating weights | 16 – 1024 | Affects training speed and memory usage |
| Activation Functions | Functions applied to neurons’ output (e.g., ReLU, Sigmoid) | ReLU, Sigmoid, Tanh, Leaky ReLU | Introduces non-linearity for complex learning |
| Accuracy | Percentage of correct predictions on test data | Varies by task, often 70% – 99% | Measures model performance |
| Loss | Value of the loss function during training | Depends on loss type, typically decreases over epochs | Indicates how well the model fits the data |
| Parameters | Total number of trainable weights and biases | Thousands to billions | Determines model capacity and resource needs |
| Dropout Rate | Fraction of neurons randomly ignored during training | 0.1 – 0.5 | Helps prevent overfitting |
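The "Parameters" row follows directly from the layer sizes. As a rough sketch, assuming a fully connected network in which every layer has a bias per neuron, the count can be computed as below. The function name and the example layer sizes (784 inputs, two hidden layers, 10 outputs) are hypothetical.

```python
def count_parameters(layer_sizes: list[int]) -> int:
    """Count trainable weights and biases in a fully connected network.

    layer_sizes lists the width of every layer, starting with the input.
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        total += fan_in * fan_out  # one weight per connection
        total += fan_out           # one bias per neuron in the next layer
    return total


# Hypothetical network: 784 inputs, hidden layers of 128 and 64, 10 outputs.
print(count_parameters([784, 128, 64, 10]))  # prints 109386
```

Even this small example has over a hundred thousand parameters, which shows how quickly depth and width translate into memory and compute requirements.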

There are various types of neural networks designed for specific tasks and applications. Feedforward neural networks are the simplest form, where information moves in one direction—from input to output—without any cycles or loops. These networks are suitable for straightforward classification tasks but may struggle with more complex relationships.

Convolutional neural networks (CNNs) are specifically designed for image processing tasks. They utilize convolutional layers to automatically detect features such as edges and textures, making them highly effective for tasks like image classification and object detection. On the other hand, recurrent neural networks (RNNs) are tailored for sequential data processing.
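The sketch below shows what a single convolutional filter computes, using plain Python lists. Two assumptions are worth flagging: the kernel is hand-picked to respond to vertical edges, whereas a CNN learns its kernels during training, and the operation is implemented as cross-correlation without padding, which is what deep learning libraries commonly call convolution.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            value = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            row.append(value)
        output.append(row)
    return output


# A 4x4 image that is dark (0) on the left and bright (1) on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-picked kernel that responds where brightness increases left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # prints [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is nonzero only in the middle column, exactly where the dark-to-bright edge sits. Stacking many such filters, and letting training choose their values, is what lets CNNs detect edges, textures, and eventually whole objects.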

They maintain a memory of previous inputs, allowing them to analyze time-dependent data effectively, such as in natural language processing or speech recognition.
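The "memory" of an RNN is a hidden state that is updated at every time step and fed back in at the next one. Here is a minimal sketch with a single scalar hidden unit; the weights `w_x` and `w_h` are hypothetical fixed values, whereas a trained RNN would learn them and would normally use vectors and matrices.

```python
import math


def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent update: mix the new input with the previous state."""
    return math.tanh(w_x * x + w_h * h_prev + b)


def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Process a sequence and return the hidden state after each step."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states


# The two sequences differ only in their first element.
states_with_signal = run_rnn([1.0, 0.0, 0.0])
states_without = run_rnn([0.0, 0.0, 0.0])
print(states_with_signal[-1] > states_without[-1])  # prints True
```

Although both sequences end with the same inputs, the final hidden states differ because the first input is still echoing through the state. That fading trace is also why plain RNNs struggle with very long sequences.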

Overcoming Challenges in Neural Network Development

Despite their potential, developing effective neural networks comes with its own set of challenges. One significant hurdle is the need for large amounts of labeled data for training purposes. Acquiring and annotating this data can be time-consuming and costly.

Moreover, ensuring data quality is paramount; poor-quality data can lead to biased or inaccurate models. Another challenge lies in the interpretability of neural networks. As these models become increasingly complex, understanding how they arrive at specific decisions becomes more difficult.

This lack of transparency can hinder trust among stakeholders and complicate regulatory compliance in sensitive industries like healthcare and finance.

Leveraging Neural Networks for Pattern Recognition

Pattern recognition is one of the most powerful applications of neural networks. By analyzing vast datasets, these systems can identify trends and anomalies that may not be immediately apparent to human analysts. For instance, in cybersecurity, neural networks can detect unusual patterns in network traffic that may indicate a potential breach or attack.

In retail, businesses leverage neural networks to analyze customer purchasing patterns and preferences. By recognizing these patterns, companies can optimize inventory management and tailor marketing strategies to meet customer demands effectively.

Harnessing the Potential of Deep Learning with Neural Networks

Deep learning is a subset of machine learning that utilizes multi-layered neural networks to analyze complex data representations. This approach has gained significant traction due to its ability to achieve state-of-the-art performance in various tasks. Deep learning models can automatically extract features from raw data without requiring extensive manual feature engineering.

The potential of deep learning is evident in applications such as autonomous vehicles, where neural networks process vast amounts of sensor data in real-time to make driving decisions. Similarly, in natural language processing, deep learning models have revolutionized machine translation and sentiment analysis by understanding context and nuances in language. As deep learning continues to evolve, we can expect even more groundbreaking advancements across diverse fields.

Integrating Neural Networks with Other Technologies

The integration of neural networks with other technologies amplifies their capabilities and opens new avenues for innovation. For instance, combining neural networks with Internet of Things (IoT) devices allows for real-time data analysis and decision-making at the edge. This integration enables smarter cities, where traffic patterns can be optimized based on real-time data from connected vehicles.

Moreover, integrating neural networks with cloud computing provides scalable resources for training large models efficiently. Cloud platforms offer powerful computing capabilities that facilitate experimentation with complex architectures without the need for extensive on-premises infrastructure. This synergy between technologies fosters a collaborative ecosystem that accelerates advancements in AI.

Ethical Considerations in Neural Network Implementation

As we harness the power of neural networks, ethical considerations must remain at the forefront of our efforts. Issues such as bias in training data can lead to discriminatory outcomes in decision-making processes. It is crucial to ensure that datasets are representative and diverse to mitigate these risks effectively.

Additionally, transparency in how neural networks operate is essential for building trust among users and stakeholders. Organizations must prioritize explainability in their models to ensure that decisions made by AI systems can be understood and justified. By addressing these ethical considerations proactively, we can foster responsible AI development that benefits society as a whole.

Future Trends in Neural Network Development

Looking ahead, several trends are poised to shape the future of neural network development. One significant trend is the increasing focus on efficiency and sustainability in AI models. As concerns about energy consumption grow, researchers are exploring ways to create more efficient architectures that require less computational power without sacrificing performance.

Another trend is the rise of federated learning—a decentralized approach where models are trained across multiple devices while keeping data localized. This method enhances privacy and security while enabling collaborative learning across organizations without sharing sensitive information. As we continue to explore the potential of neural networks, we must remain vigilant about ethical implications and strive for transparency in our approaches.
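The aggregation step at the heart of federated learning can be sketched in a few lines. This example assumes the common weighted-averaging scheme (often called federated averaging), in which each client trains locally and sends only its model weights to a server. The local training itself is omitted, and the client weights and dataset sizes are made-up values.

```python
def federated_average(client_weights, client_sizes):
    """Average client models, weighting each by its local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Hypothetical weights from two clients after local training.
client_weights = [[1.0, 2.0], [3.0, 4.0]]
client_sizes = [100, 300]  # number of local training examples per client

global_weights = federated_average(client_weights, client_sizes)
print(global_weights)  # prints [2.5, 3.5]
```

Only the weight vectors cross the network, never the raw training examples, which is where the privacy benefit comes from. The client with more data pulls the global model further toward its own weights.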

The future holds immense promise for this technology as it evolves alongside our understanding of intelligence itself—both human and artificial.

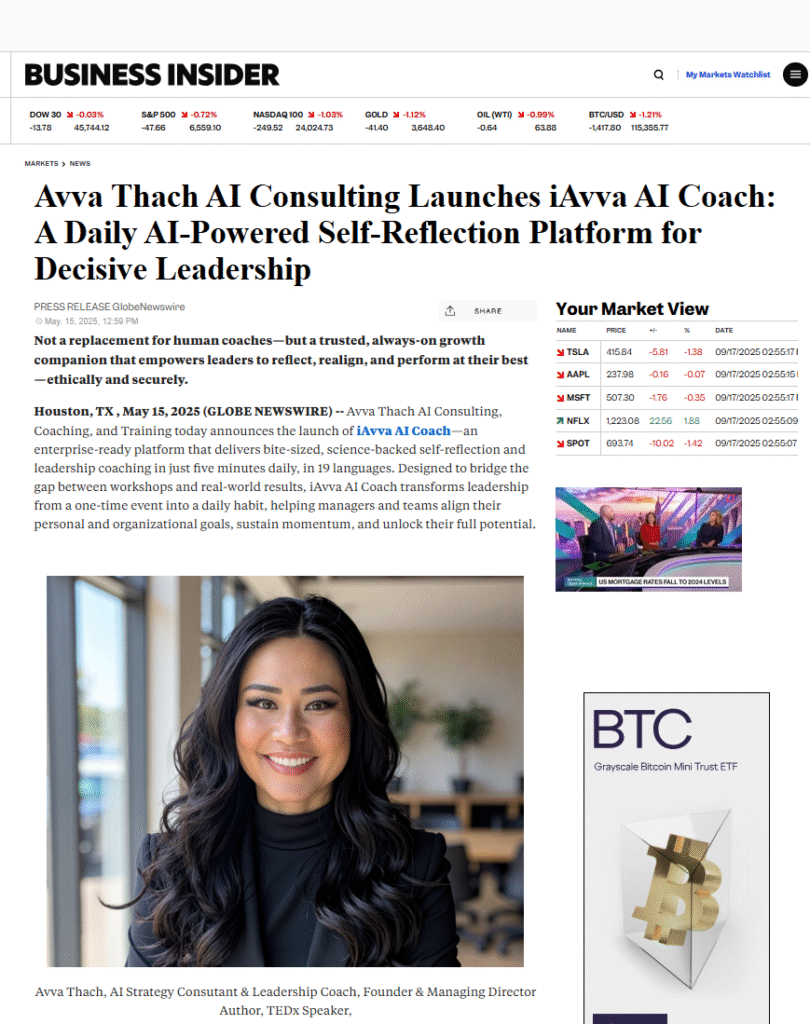


FAQs

What is a neural network?

A neural network is a computational model inspired by the structure and function of the human brain. It consists of layers of interconnected nodes, or neurons, that process data and recognize patterns to perform tasks such as classification, regression, and decision-making.

How do neural networks work?

Neural networks work by passing input data through multiple layers of neurons. Each neuron applies a mathematical function to the input and passes the result to the next layer. During training, the network adjusts the weights of connections to minimize the difference between predicted and actual outputs.

What are the main types of neural networks?

Common types of neural networks include feedforward neural networks, convolutional neural networks (CNNs), recurrent neural networks (RNNs), and deep neural networks (DNNs). Each type is suited for different tasks such as image recognition, sequence prediction, or natural language processing.

What is the difference between shallow and deep neural networks?

Shallow neural networks have only one or two hidden layers, while deep neural networks stack many more. Deep networks can model more complex patterns and representations, which often makes them more effective for tasks like image and speech recognition, at the cost of more data and computation.

What are activation functions in neural networks?

Activation functions introduce non-linearity into the neural network, allowing it to learn complex patterns. Common activation functions include sigmoid, tanh, and ReLU (Rectified Linear Unit).
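For reference, the activation functions named above can be written in a few lines of plain Python. The leaky ReLU slope of 0.01 is a commonly used default, assumed here for illustration.

```python
import math


def sigmoid(z: float) -> float:
    """Map any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z: float) -> float:
    """Map any real number into (-1, 1)."""
    return math.tanh(z)


def relu(z: float) -> float:
    """Pass positive values through and clamp negatives to zero."""
    return max(0.0, z)


def leaky_relu(z: float, slope: float = 0.01) -> float:
    """Like ReLU, but keep a small slope for negative inputs."""
    return z if z > 0 else slope * z


for z in (-2.0, 0.0, 3.0):
    print(z, round(sigmoid(z), 3), round(tanh(z), 3), relu(z), leaky_relu(z))
```

Without a non-linear function like these between layers, any stack of layers would collapse into a single linear transformation, no matter how deep the network.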

What is backpropagation?

Backpropagation is the algorithm used to compute the gradients needed to train a neural network. It calculates the gradient of the loss function with respect to each weight by propagating the error backward through the network using the chain rule. An optimizer such as gradient descent then uses these gradients to update the weights, enabling the network to learn from its mistakes.
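To show the chain rule at work, the sketch below applies backpropagation by hand to a deliberately tiny network: one input, one tanh hidden unit, one linear output, and a squared-error loss. The network shape and the numeric values are assumptions made for the example. A finite-difference check confirms that the hand-derived gradients match.

```python
import math


def forward(w1, w2, x, target):
    """Forward pass: hidden activation, output, and squared-error loss."""
    h = math.tanh(w1 * x)
    y = w2 * h
    loss = (y - target) ** 2
    return h, y, loss


def backward(w1, w2, x, target):
    """Backward pass: apply the chain rule from the loss back to each weight."""
    h, y, _ = forward(w1, w2, x, target)
    d_y = 2.0 * (y - target)           # dLoss/dy
    d_w2 = d_y * h                     # y = w2 * h
    d_h = d_y * w2                     # error flowing back into the hidden unit
    d_w1 = d_h * (1.0 - h * h) * x     # derivative of tanh is 1 - tanh^2
    return d_w1, d_w2


def numerical_gradient(w1, w2, x, target, eps=1e-6):
    """Estimate the same gradients by nudging each weight slightly."""
    d_w1 = (forward(w1 + eps, w2, x, target)[2] - forward(w1 - eps, w2, x, target)[2]) / (2 * eps)
    d_w2 = (forward(w1, w2 + eps, x, target)[2] - forward(w1, w2 - eps, x, target)[2]) / (2 * eps)
    return d_w1, d_w2


# Hypothetical weights, input, and target.
analytic = backward(w1=0.5, w2=-1.5, x=2.0, target=1.0)
numeric = numerical_gradient(w1=0.5, w2=-1.5, x=2.0, target=1.0)
print(all(abs(a - n) < 1e-5 for a, n in zip(analytic, numeric)))  # prints True
```

Deep learning frameworks automate the backward pass for networks with millions of weights, but each step is the same local chain-rule multiplication shown here.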

What are common applications of neural networks?

Neural networks are widely used in image and speech recognition, natural language processing, autonomous vehicles, medical diagnosis, financial forecasting, and many other fields requiring pattern recognition and predictive analytics.

What are the challenges of using neural networks?

Challenges include the need for large amounts of labeled data, high computational resources, risk of overfitting, difficulty in interpreting the model’s decisions, and tuning hyperparameters for optimal performance.

How do neural networks differ from traditional machine learning models?

Neural networks can automatically learn feature representations from raw data, whereas traditional machine learning models often require manual feature engineering. Neural networks are also often better suited to large, unstructured datasets such as images, audio, and text.

What is the role of training data in neural networks?

Training data is essential for teaching the neural network to recognize patterns and make accurate predictions. The quality and quantity of training data directly impact the network’s performance and generalization ability.
