As artificial intelligence (AI) continues to evolve at an unprecedented pace, the need for ethical innovation in AI policy has never been more critical. The rapid integration of AI technologies into various sectors raises profound questions about the implications of these advancements on society. Ethical innovation is not merely a regulatory requirement; it is a strategic imperative that can shape the trajectory of AI development and its societal impact.

By embedding ethical considerations into the fabric of AI policy, organizations can foster trust, enhance user acceptance, and ultimately drive sustainable growth. Moreover, ethical innovation serves as a guiding principle for responsible AI deployment. It encourages stakeholders to consider the broader implications of their technological advancements, ensuring that AI systems are designed with human values at their core.

This approach not only mitigates risks associated with bias, discrimination, and privacy violations but also promotes a culture of accountability and transparency. As we navigate the complexities of AI integration, prioritizing ethical innovation will be essential for building a future where technology serves humanity rather than undermines it.

Key Takeaways

- Ethical innovation is crucial for responsible AI policy that benefits society.

- AI’s societal impact requires careful balancing of innovation with ethical concerns.

- Transparency, accountability, and fairness are key principles in AI governance.

- Government collaboration with industry and academia strengthens AI policy development.

- Protecting privacy and addressing bias are essential for trustworthy AI systems.

Understanding the Impact of AI on Society

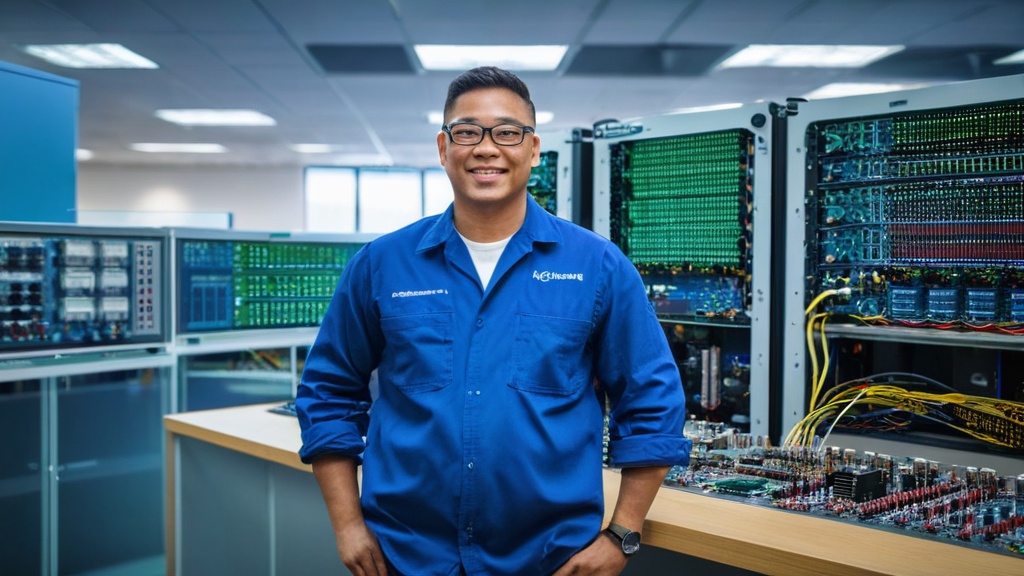

The impact of AI on society is multifaceted, influencing everything from economic structures to social interactions.

For instance, in manufacturing, AI-driven automation can streamline processes, reduce costs, and improve product quality.

However, these advancements come with challenges that must be addressed if the benefits are to be shared equitably, and the societal implications of AI are not solely positive.

Job displacement caused by automation raises concerns about economic inequality. Additionally, the pervasive use of AI in decision-making processes can lead to unintended consequences if not carefully managed. For example, biased algorithms can perpetuate existing inequalities, while a lack of transparency can erode public trust in institutions.

Understanding these dualities is crucial for developing policies that harness the benefits of AI while mitigating its risks.

Balancing Innovation and Ethical Considerations in AI Policy

Striking a balance between innovation and ethical considerations in AI policy is a complex yet essential endeavor. Policymakers must recognize that while fostering innovation is vital for economic growth and competitiveness, it should not come at the expense of ethical standards. This balance requires a nuanced approach that encourages technological advancement while safeguarding fundamental human rights and societal values.

To achieve this equilibrium, organizations must engage in proactive dialogue with stakeholders across various sectors. This includes not only technologists and business leaders but also ethicists, sociologists, and representatives from marginalized communities. By incorporating diverse perspectives into the policy-making process, organizations can develop frameworks that promote responsible innovation.

This collaborative approach ensures that ethical considerations are woven into the fabric of AI development from the outset, rather than being an afterthought.

Key Principles for Ethical AI Policy

Establishing key principles for ethical AI policy is essential for guiding the development and deployment of AI technologies. These principles should encompass fairness, accountability, transparency, and inclusivity. Fairness ensures that AI systems do not perpetuate biases or discrimination against any group; accountability mandates that organizations take responsibility for the outcomes of their AI systems; transparency requires that stakeholders understand how decisions are made; and inclusivity emphasizes the importance of diverse voices in shaping AI technologies.

Furthermore, these principles should be adaptable to the evolving landscape of AI technologies. As new challenges emerge, policymakers must be willing to revisit and revise these principles to ensure they remain relevant and effective. By grounding AI policy in these foundational principles, organizations can create a framework that not only promotes innovation but also protects individual rights and fosters public trust.

The Role of Government in Shaping AI Policy

| Metric | Description | Current Status | Target/Goal | Measurement Frequency |
|---|---|---|---|---|
| AI Regulation Adoption Rate | Percentage of countries with formal AI policies or regulations | 45% | 75% by 2025 | Annual |
| AI Ethics Framework Implementation | Number of organizations adopting AI ethics guidelines | 1200 organizations | 2000 organizations by 2026 | Biannual |
| AI Safety Incident Reports | Number of reported AI-related safety incidents | 35 incidents in 2023 | Reduce by 50% by 2027 | Quarterly |
| Public Awareness of AI Policy | Percentage of population aware of AI policy issues | 38% | 60% by 2025 | Annual |
| Investment in AI Policy Research | Amount of funding allocated to AI policy research (in millions) | 150 million | 300 million by 2026 | Annual |
| AI Workforce Training Programs | Number of training programs focused on AI policy and ethics | 85 programs | 150 programs by 2025 | Annual |

Governments play a pivotal role in shaping AI policy by establishing regulatory frameworks that guide the development and deployment of AI technologies. This role extends beyond mere regulation; governments must also act as facilitators of innovation by creating an environment conducive to responsible AI development. This involves investing in research and development, supporting public-private partnerships, and fostering collaboration between various stakeholders.

Moreover, governments have a responsibility to ensure that their policies reflect the values and needs of their constituents. Engaging with citizens through public consultations and participatory processes can help policymakers understand the concerns and aspirations of the public regarding AI technologies. By incorporating these insights into policy decisions, governments can create frameworks that not only promote innovation but also address societal challenges associated with AI.

Collaboration between Government, Industry, and Academia

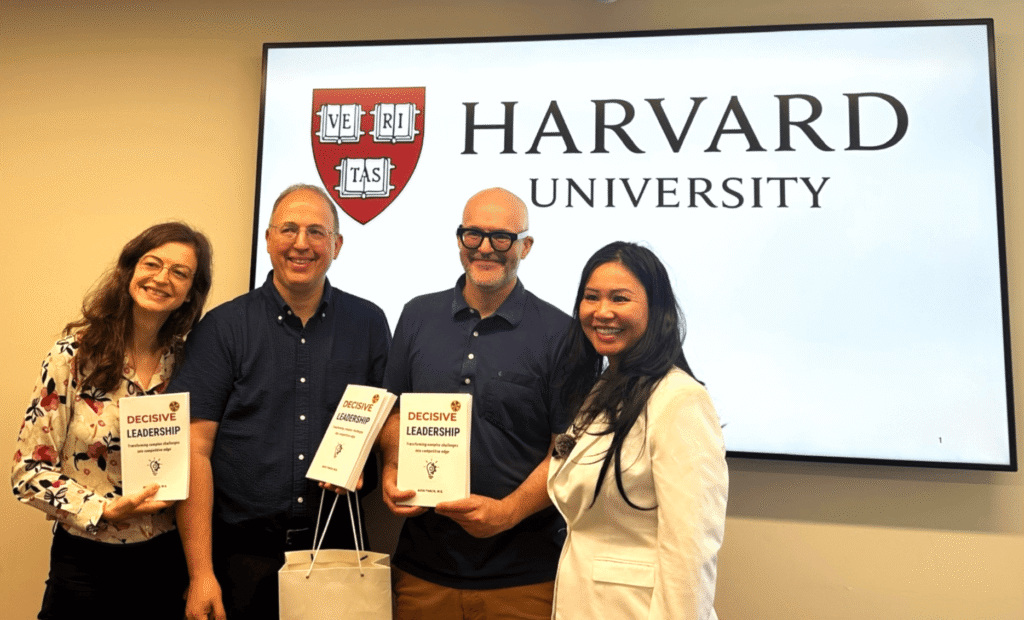

Collaboration between government, industry, and academia is essential for developing effective AI policies that address ethical considerations while promoting innovation. Each sector brings unique perspectives and expertise to the table, creating a holistic approach to AI governance. Governments can provide regulatory oversight and funding for research initiatives; industry can offer insights into practical applications and market dynamics; and academia can contribute rigorous research and ethical frameworks.

This collaborative model fosters an ecosystem where knowledge sharing and innovation thrive. For instance, joint initiatives between universities and tech companies can lead to the development of ethical guidelines for AI deployment in real-world scenarios. Additionally, government-sponsored research grants can incentivize interdisciplinary studies that explore the societal implications of AI technologies.

By working together, these stakeholders can create a robust framework for responsible AI development that benefits society as a whole.

Addressing Bias and Fairness in AI Algorithms

One of the most pressing challenges in AI policy is addressing bias and fairness in algorithms. As AI systems increasingly influence decision-making processes across various domains—such as hiring, lending, and law enforcement—the potential for bias to perpetuate existing inequalities becomes a significant concern. Algorithms trained on historical data may inadvertently learn and replicate biases present in that data, leading to discriminatory outcomes.

To combat this issue, organizations must prioritize fairness in their algorithmic design processes. This involves implementing rigorous testing protocols to identify and mitigate biases before deploying AI systems. Additionally, fostering diversity within teams responsible for developing these algorithms can help ensure that multiple perspectives are considered during the design phase.

By actively addressing bias and promoting fairness in AI algorithms, organizations can build systems that serve all members of society equitably.
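One simple form such a testing protocol can take is a comparison of outcome rates across groups before deployment. The sketch below is illustrative only: the function names, group labels, and sample data are hypothetical, and the ratio it computes is just one of several fairness measures an audit might use.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes for each group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Ratio of the lowest group selection rate to the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest


# Hypothetical audit sample: (group label, was the applicant approved?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

# Group A is approved 75% of the time and group B 25% of the time.
ratio = disparate_impact_ratio(sample)
print(f"Disparate impact ratio: {ratio:.2f}")
```

A ratio well below 1.0 signals that one group receives favorable outcomes far less often than another. A common rule of thumb in US employment contexts treats a ratio under 0.8 as a prompt for closer review, though a single number like this is a starting point for investigation rather than proof of discrimination.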

Ensuring Transparency and Accountability in AI Systems

Transparency and accountability are critical components of ethical AI policy. As AI systems become more complex and autonomous, understanding how they operate becomes increasingly challenging for users and stakeholders alike. Ensuring transparency involves providing clear explanations of how algorithms make decisions, what data they use, and how they are trained.

Accountability goes hand-in-hand with transparency; organizations must be willing to take responsibility for the outcomes produced by their AI systems. This includes establishing mechanisms for redress when individuals are adversely affected by algorithmic decisions. By fostering a culture of transparency and accountability, organizations can build trust with users and stakeholders while promoting responsible AI deployment.
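In practice, transparency and redress both depend on keeping a record of each automated decision. The following is a minimal sketch of what such a record might hold, assuming a hypothetical `DecisionRecord` structure; the field names and example values are illustrative and not drawn from any particular standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit entry for one automated decision."""
    subject_id: str       # who the decision was about
    model_version: str    # which model produced the decision
    inputs_used: dict     # the data the model actually saw
    outcome: str          # what was decided
    reasons: list         # plain-language factors behind the outcome
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    appeal_requested: bool = False

    def request_appeal(self):
        """Flag the decision for human review."""
        self.appeal_requested = True

    def to_json(self):
        """Serialize the record so it can be stored or shared."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example: a declined application that the applicant contests.
record = DecisionRecord(
    subject_id="applicant-17",
    model_version="credit-model-2.3",
    inputs_used={"income_band": "medium", "account_tenure_months": 18},
    outcome="declined",
    reasons=["short account tenure"],
)
record.request_appeal()
print(record.to_json())
```

Storing the model version and the inputs alongside the outcome lets a reviewer reconstruct why a decision was made, and the appeal flag gives affected individuals a concrete path to human review.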

Protecting Privacy and Data Security in AI Policy

As AI systems rely heavily on data to function effectively, protecting privacy and data security is paramount in shaping ethical AI policy. The collection and processing of personal data raise significant concerns about individual privacy rights and data protection regulations. Organizations must navigate these complexities by implementing robust data governance frameworks that prioritize user consent, data minimization, and secure storage practices.

Moreover, policymakers must establish clear guidelines regarding data usage in AI applications to ensure compliance with privacy regulations such as GDPR or CCPA. By prioritizing privacy protection within their AI policies, organizations can mitigate risks associated with data breaches while fostering user trust in their technologies.
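Data minimization can be made concrete with a small example. The sketch below assumes a hypothetical allow-list of fields that a model genuinely needs and replaces the direct identifier with a keyed hash; the field names and the key are placeholders, and a real deployment would load the key from a managed secret store.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields the model is permitted to see.
ALLOWED_FIELDS = {"age_band", "region", "account_tenure_months"}


def minimize(record, allowed=ALLOWED_FIELDS):
    """Keep only the fields on the allow-list."""
    return {key: value for key, value in record.items() if key in allowed}


def pseudonymize(user_id, secret_key):
    """Replace a direct identifier with a keyed SHA-256 hash."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical raw record as collected from a user.
raw = {
    "user_id": "u-1029",
    "email": "person@example.com",
    "age_band": "30-39",
    "region": "EU-West",
    "account_tenure_months": 18,
}

key = b"replace-with-a-managed-secret"  # placeholder, not a real key
clean = minimize(raw)
clean["subject_token"] = pseudonymize(raw["user_id"], key)
print(sorted(clean))
```

The email address and raw user ID never reach the downstream system. Pseudonymized records can still count as personal data under regulations such as GDPR, so this step reduces exposure without removing the need for consent and secure storage.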

Considerations for AI Policy in Healthcare and Education

The implications of AI policy extend significantly into sectors such as healthcare and education, where ethical considerations are particularly pronounced. In healthcare, the use of AI technologies has the potential to revolutionize patient care through improved diagnostics and personalized treatment plans. However, this also raises questions about data privacy, informed consent, and equitable access to care.

Similarly, in education, AI-driven tools can enhance learning experiences by providing personalized feedback and support to students. Yet concerns about algorithmic bias in educational assessments or tracking student performance must be addressed to ensure fairness across diverse populations. Policymakers must consider these sector-specific challenges when developing comprehensive AI policies that prioritize ethical considerations while promoting innovation.

The Future of AI Policy: Anticipating and Addressing Ethical Challenges

As we look toward the future of AI policy, it is essential to anticipate emerging ethical challenges associated with technological advancements. The rapid pace of innovation means that policymakers must remain agile and responsive to new developments while ensuring that ethical considerations remain at the forefront of decision-making processes. This proactive approach involves continuous engagement with stakeholders across sectors to identify potential risks associated with emerging technologies such as autonomous systems or deep learning algorithms.

By fostering an environment where ethical considerations are integrated into every stage of technology development, from ideation to deployment, organizations can navigate the complexities of the evolving landscape while promoting responsible innovation.

In conclusion, the journey toward ethical innovation in AI policy requires collaboration among government, industry, academia, and civil society. By prioritizing key principles such as fairness, accountability, transparency, and inclusivity, and by addressing challenges related to bias, privacy protection, and sector-specific considerations, stakeholders can shape a future where technology serves humanity responsibly and equitably.

FAQs

What is AI policy?

AI policy refers to the set of guidelines, regulations, and frameworks developed by governments, organizations, and institutions to govern the development, deployment, and use of artificial intelligence technologies. It aims to ensure that AI is used ethically, safely, and responsibly.

Why is AI policy important?

AI policy is important because it helps address ethical concerns, privacy issues, security risks, and potential biases associated with AI systems. It also promotes transparency, accountability, and fairness in AI applications, ensuring that the technology benefits society as a whole.

Who creates AI policies?

AI policies are typically created by governments, international organizations, regulatory bodies, industry groups, and academic institutions. Collaboration among these stakeholders is often necessary to develop comprehensive and effective AI governance frameworks.

What are common challenges in AI policy development?

Common challenges include balancing innovation with regulation, addressing ethical dilemmas, managing data privacy, preventing algorithmic bias, ensuring transparency, and creating policies that can adapt to rapidly evolving AI technologies.

How do AI policies impact businesses?

AI policies can affect how businesses develop and deploy AI solutions by setting compliance requirements, data handling standards, and ethical guidelines. Adhering to these policies can help businesses avoid legal risks and build trust with customers and stakeholders.

Are there international AI policy standards?

While there is no single global standard, various international organizations such as the OECD, UNESCO, and the European Union have proposed guidelines and principles to harmonize AI policy approaches across countries.

What ethical principles are commonly included in AI policies?

Common ethical principles include fairness, transparency, accountability, privacy protection, safety, and the promotion of human rights. These principles guide the responsible development and use of AI technologies.

How can individuals stay informed about AI policy developments?

Individuals can stay informed by following updates from government agencies, international organizations, academic research, industry reports, and reputable news sources focused on technology and policy.
