As artificial intelligence (AI) continues to permeate various sectors, the urgency for comprehensive regulation becomes increasingly apparent. The rapid advancement of AI technologies has outpaced the development of legal frameworks, leading to a landscape where ethical dilemmas and potential risks are often left unaddressed. The need for regulation is not merely a response to the challenges posed by AI; it is a proactive measure to ensure that these technologies are harnessed responsibly and effectively.

Without a robust regulatory framework, we risk creating systems that could exacerbate existing inequalities, infringe on privacy rights, and undermine public trust in technology. Moreover, the global nature of AI development complicates the regulatory landscape. Different countries have varying approaches to data protection, privacy, and ethical standards, which can lead to inconsistencies and confusion.

A unified regulatory approach is essential to create a level playing field for businesses while safeguarding the interests of consumers and society at large. By establishing clear guidelines and standards, we can foster innovation while ensuring that AI technologies are developed and deployed in a manner that aligns with societal values and ethical principles.

Key Takeaways

- AI regulation is essential to address ethical, safety, and accountability concerns.

- Transparency and bias mitigation are critical for fair AI decision-making.

- Collaboration with governments and regulators supports effective AI governance.

- Integrating ethics into AI design ensures responsible innovation.

- Continuous education and monitoring help maintain compliance with evolving AI laws.

The Importance of Ethical Considerations in AI Implementation

Ethical considerations are paramount in the implementation of AI systems. As organizations increasingly rely on AI to make decisions that affect people’s lives—ranging from hiring practices to healthcare diagnostics—the ethical implications of these technologies cannot be overlooked. The integration of ethical frameworks into AI development is essential to mitigate risks and ensure that these systems operate in a manner that is fair, just, and beneficial to all stakeholders involved.

This requires a shift in mindset from viewing AI as merely a tool for efficiency to recognizing it as a powerful entity that can shape societal norms and values. Furthermore, ethical considerations extend beyond compliance with regulations; they encompass the broader impact of AI on society. Organizations must engage in thoughtful deliberation about the potential consequences of their AI systems, including how they may reinforce or challenge existing power dynamics.

By prioritizing ethical considerations, businesses can build trust with their customers and stakeholders, ultimately leading to more sustainable and responsible AI practices. This commitment to ethics not only enhances corporate reputation but also positions organizations as leaders in the responsible use of technology.

Ensuring Transparency in AI Algorithms

Transparency in AI algorithms is crucial for fostering trust and accountability. As AI systems become more complex, understanding how they arrive at decisions becomes increasingly challenging. This opacity can lead to skepticism among users and stakeholders, particularly when decisions have significant implications for individuals’ lives.

To address this issue, organizations must prioritize transparency by providing clear explanations of how their algorithms function and the data they utilize. This not only demystifies the technology but also empowers users to make informed decisions about their interactions with AI systems. Moreover, transparency is essential for identifying and rectifying potential biases within AI algorithms.

When organizations openly share their methodologies and decision-making processes, it becomes easier to scrutinize their systems for fairness and equity. This collaborative approach encourages stakeholders to engage in constructive dialogue about the ethical implications of AI technologies, ultimately leading to more responsible practices. By committing to transparency, organizations can enhance their credibility and foster a culture of accountability that resonates with consumers and regulators alike.
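One way to picture a "clear explanation" of an algorithm is a per-feature breakdown of a single decision. The sketch below assumes a simple linear scoring model; the feature names, weights, and bias are hypothetical and chosen only for illustration. More complex models generally need dedicated explanation techniques that this example does not cover.

```python
def explain_linear_score(weights, bias, features):
    """Return the score and each feature's contribution, largest effect first.

    Assumes a linear model: score = bias + sum(weight * feature value).
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank by absolute size so the most influential inputs are listed first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical credit-style model and applicant (illustrative values only).
weights = {"years_experience": 2.0, "missed_payments": -5.0, "income_thousands": 0.5}
applicant = {"years_experience": 3, "missed_payments": 1, "income_thousands": 40}

score, ranked = explain_linear_score(weights, bias=10.0, features=applicant)
print(f"score = {score}")
for name, value in ranked:
    print(f"{name}: {value:+.1f}")
```

A breakdown like this can be shown to the affected person or to an auditor, which makes it easier to spot an input that dominates decisions in a way nobody intended.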

Addressing Bias and Discrimination in AI Systems

Bias and discrimination in AI systems pose significant challenges that must be addressed proactively. These issues often arise from the data used to train algorithms, which may reflect historical inequalities or societal prejudices. As a result, AI systems can inadvertently perpetuate these biases, leading to unfair outcomes for marginalized groups.

To combat this problem, organizations must adopt rigorous data governance practices that prioritize diversity and inclusivity in their training datasets. By ensuring that data accurately represents the populations affected by their algorithms, organizations can mitigate the risk of biased outcomes.

Additionally, addressing bias requires ongoing monitoring and evaluation of AI systems throughout their lifecycle. Organizations should implement mechanisms for auditing their algorithms regularly to identify and rectify any discriminatory patterns that may emerge over time. This commitment to continuous improvement not only enhances the fairness of AI systems but also demonstrates an organization’s dedication to ethical practices.
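A regular audit of this kind can start with something as simple as comparing outcome rates across groups. The sketch below is a minimal illustration, not a complete fairness methodology: the group labels and decisions are made up, and the 0.8 review threshold is an assumption that echoes the "four-fifths" rule of thumb from US employment-selection guidance.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favourable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favourable decision.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favoured
    return min(rates.values()) / highest


def audit(records, threshold=0.8):
    """Flag the system for review when the ratio falls below `threshold`."""
    rates = selection_rates(records)
    ratio = disparate_impact_ratio(rates)
    return {"rates": rates, "ratio": ratio, "needs_review": ratio < threshold}


# Hypothetical shortlisting decisions: (group label, was the candidate shortlisted?)
decisions = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
report = audit(decisions)
print(report)
```

Running the same check on every retraining cycle, and on live decisions at a fixed interval, gives a simple trend line for whether discriminatory patterns are emerging over time.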

Establishing Accountability for AI Decision-Making

| Region | Regulatory Framework | Key Focus Areas | Implementation Status | Notable Legislation |
|---|---|---|---|---|
| European Union | AI Act (Proposed) | Risk-based classification, transparency, accountability, data governance | Draft stage, expected enforcement 2024-2025 | Artificial Intelligence Act |
| United States | Sector-specific guidelines, voluntary frameworks | Privacy, bias mitigation, innovation encouragement | Ongoing development, no comprehensive federal AI law | Algorithmic Accountability Act (proposed) |
| China | Guidelines and standards for AI ethics and security | Data security, ethical use, social stability | Partially implemented, evolving rapidly | New Generation AI Development Plan |
| United Kingdom | AI Strategy and regulatory sandbox | Innovation, safety, transparency | Active, with ongoing consultations | National AI Strategy |
| Canada | Directive on Automated Decision-Making | Transparency, accountability, fairness | Implemented for federal agencies | Directive on Automated Decision-Making |

Establishing accountability for AI decision-making is essential for ensuring responsible use of these technologies. As AI systems increasingly take on roles traditionally held by humans—such as making hiring decisions or determining creditworthiness—questions arise about who is responsible when these systems produce erroneous or harmful outcomes. Organizations must clearly define accountability structures that delineate roles and responsibilities related to AI decision-making processes.

This includes identifying individuals or teams responsible for overseeing algorithm development, deployment, and monitoring. Moreover, accountability extends beyond internal structures; it also involves engaging with external stakeholders, including regulators and consumers. Organizations should be prepared to explain their decision-making processes and provide recourse for individuals adversely affected by AI-driven decisions.

By fostering a culture of accountability, organizations can enhance public trust in their technologies while ensuring that they remain committed to ethical practices. This proactive approach not only mitigates risks but also positions organizations as leaders in responsible AI deployment.
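In practice, an accountability structure often comes down to recording, for every automated decision, who owns the system and how the affected person can contest the outcome. The sketch below is one hypothetical shape for such a record; the class, field names, and example values are assumptions made for illustration, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Audit-trail entry for one AI-driven decision (hypothetical schema)."""

    decision_id: str
    system: str
    owner: str        # team accountable for the system's behaviour
    outcome: str
    explanation: str  # plain-language reason given to the affected person
    made_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    appeals: list = field(default_factory=list)

    def file_appeal(self, reason):
        """Attach an appeal to the record and return who must review it."""
        self.appeals.append(reason)
        return self.owner


record = DecisionRecord(
    decision_id="loan-0001",
    system="credit-scoring-v2",
    owner="credit-risk-team",
    outcome="declined",
    explanation="Score below approval cutoff",
)
reviewer = record.file_appeal("Income data was out of date")
print(reviewer, len(record.appeals))
```

Keeping the owner on the record itself means an appeal always has a named reviewer, even if the team structure changes after the decision was made.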

Balancing Innovation with Safety in AI Development

The pursuit of innovation in AI development must be balanced with considerations of safety and risk management. While the potential benefits of AI are immense—ranging from increased efficiency to enhanced decision-making—organizations must remain vigilant about the potential risks associated with these technologies. Striking this balance requires a comprehensive understanding of both the capabilities and limitations of AI systems.

Organizations should adopt a risk-based approach to innovation that prioritizes safety without stifling creativity or progress. To achieve this balance, organizations can implement frameworks that guide the development of AI technologies while ensuring safety measures are integrated from the outset. This includes conducting thorough risk assessments during the design phase, establishing protocols for testing and validation, and engaging in ongoing monitoring once systems are deployed.

By embedding safety considerations into the innovation process, organizations can foster an environment where creativity thrives alongside responsible practices.

Collaborating with Regulatory Agencies and Governments

Collaboration with regulatory agencies and governments is essential for developing effective frameworks for AI regulation. As technology evolves rapidly, regulators often find themselves playing catch-up, struggling to keep pace with advancements in AI capabilities.

Furthermore, collaboration fosters a shared understanding of the challenges posed by AI technologies across sectors. By working together, organizations and regulators can identify best practices, share knowledge, and develop guidelines that promote responsible use of AI while safeguarding public interests. This collaborative approach not only enhances regulatory effectiveness but also positions organizations as active participants in shaping the future of technology governance.

Integrating Ethical Principles into AI Design and Development

Integrating ethical principles into the design and development of AI systems is crucial for fostering responsible innovation. Organizations should establish clear ethical guidelines that inform every stage of the development process, from ideation to deployment. This includes considering the potential societal impact of their technologies, engaging diverse perspectives during design discussions, and adopting user-centric approaches that put human welfare first.

Moreover, organizations should invest in training programs that equip their teams with the knowledge and skills necessary to navigate ethical dilemmas in AI development. By fostering a culture of ethical awareness within their organizations, leaders can empower employees to make informed decisions that align with organizational values while addressing potential risks associated with AI technologies.

Educating Stakeholders on AI Regulation and Ethics

Education plays a pivotal role in fostering understanding around AI regulation and ethics among stakeholders. As technology continues to evolve rapidly, it is essential for executives, employees, consumers, and regulators alike to stay informed about emerging trends, challenges, and best practices related to AI governance. Organizations should prioritize educational initiatives that promote awareness of ethical considerations in AI implementation while providing resources for navigating regulatory frameworks.

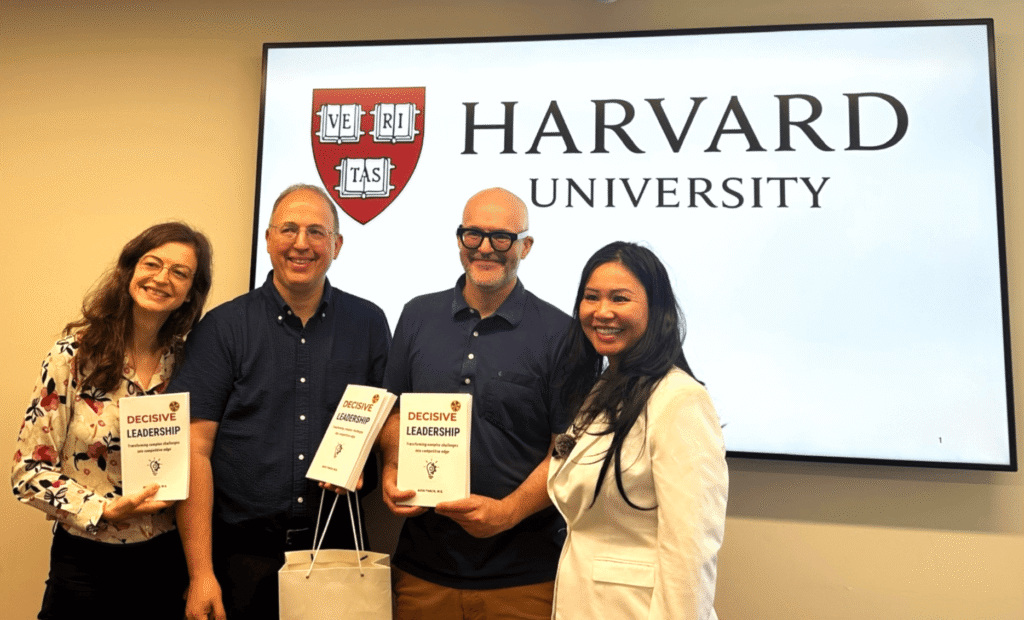

Additionally, fostering open dialogue among stakeholders can facilitate knowledge sharing and collaboration on ethical issues related to AI technologies. By creating forums for discussion—such as workshops or industry conferences—organizations can encourage diverse perspectives while promoting collective learning about responsible practices in AI development.

Monitoring and Evaluating AI Systems for Compliance

Monitoring and evaluating AI systems for compliance with established regulations is essential for ensuring responsible use of these technologies. Organizations should implement robust monitoring mechanisms that assess algorithm performance against predefined standards while identifying potential areas for improvement. Regular evaluations not only help organizations maintain compliance but also provide valuable insights into how their systems are functioning in real-world scenarios.
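Assessing performance "against predefined standards" can be expressed as a small, repeatable check. The sketch below assumes each standard is a minimum acceptable value for a named metric; the metric names and thresholds are hypothetical and would in practice come from the organization's own policies or the applicable regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Standard:
    """A predefined minimum for one monitored metric (illustrative)."""

    metric: str
    minimum: float


def evaluate(measurements, standards):
    """Compare measured values with each standard.

    Returns a list of (metric, status, value) tuples, where status is
    "ok", "below_standard", or "missing" when no measurement was reported.
    """
    findings = []
    for standard in standards:
        value = measurements.get(standard.metric)
        if value is None:
            findings.append((standard.metric, "missing", None))
        elif value < standard.minimum:
            findings.append((standard.metric, "below_standard", value))
        else:
            findings.append((standard.metric, "ok", value))
    return findings


# Hypothetical standards and one monitoring period's measurements.
standards = [
    Standard("accuracy", 0.90),
    Standard("disparate_impact_ratio", 0.80),
    Standard("appeal_resolution_rate", 0.95),
]
measurements = {"accuracy": 0.93, "disparate_impact_ratio": 0.72}

findings = evaluate(measurements, standards)
compliant = all(status == "ok" for _, status, _ in findings)
for finding in findings:
    print(finding)
print("compliant:", compliant)
```

Treating a missing measurement as a finding in its own right is a deliberate choice here: a metric that silently stops being reported is itself a compliance gap.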

Moreover, organizations should establish feedback loops that allow users to report concerns or issues related to AI decision-making processes. By actively soliciting input from stakeholders, organizations can enhance their understanding of how their systems impact users while demonstrating a commitment to transparency and accountability.

Adapting to Evolving Regulatory Frameworks for AI

The regulatory landscape surrounding AI is continually evolving as new challenges emerge and societal expectations shift. Organizations must remain agile in adapting to these changes while proactively engaging with regulatory developments that may impact their operations. This requires a commitment to ongoing education about emerging regulations as well as an openness to revising internal policies and practices accordingly.

By fostering a culture of adaptability within their organizations, leaders can ensure that their teams are equipped to navigate evolving regulatory frameworks effectively. This proactive approach not only mitigates risks associated with non-compliance but also positions organizations as leaders in responsible innovation within the rapidly changing landscape of artificial intelligence.

In conclusion, as we navigate the complexities of artificial intelligence regulation, it is imperative for organizations to prioritize ethical considerations while fostering transparency, accountability, and collaboration with stakeholders. By integrating these principles into every stage of AI development, from design through deployment, organizations can harness the transformative potential of technology while safeguarding public interests and promoting responsible practices within the industry.

As the landscape of artificial intelligence continues to evolve, the need for effective AI regulation becomes increasingly critical. Executives must not only understand the technological advancements but also the ethical implications and regulatory frameworks that govern AI usage. For insights on the essential skills required for modern executives in this AI-driven era, you can read more in the article on Leading in the AI Era: Essential Skills for Modern Executives.

FAQs

What is AI regulation?

AI regulation refers to the development and implementation of laws, guidelines, and policies designed to govern the use, development, and deployment of artificial intelligence technologies. The goal is to ensure AI systems are safe, ethical, transparent, and respect privacy and human rights.

Why is AI regulation important?

AI regulation is important to prevent misuse, bias, and harm caused by AI systems. It helps protect individuals’ rights, promotes fairness, ensures accountability, and fosters public trust in AI technologies.

Who is responsible for creating AI regulations?

AI regulations are typically created by governments, regulatory bodies, international organizations, and sometimes industry groups. Collaboration among these stakeholders is common to address the global and cross-sectoral nature of AI.

What are some common areas covered by AI regulations?

Common areas include data privacy, algorithmic transparency, accountability, bias mitigation, safety standards, ethical use, and the impact of AI on employment and society.

Are there any existing AI regulations?

Yes, several regions have introduced or proposed AI regulations. For example, the European Union has proposed the AI Act, which aims to regulate high-risk AI applications. Other countries are also developing frameworks to address AI governance.

How do AI regulations affect businesses?

AI regulations may require businesses to conduct risk assessments, ensure transparency, maintain data protection standards, and implement measures to prevent bias and discrimination. Compliance can involve additional costs but also helps build consumer trust.

What challenges exist in regulating AI?

Challenges include the rapid pace of AI development, the complexity of AI systems, balancing innovation with safety, defining accountability, and creating regulations that are adaptable to future technologies.

Can AI regulation stifle innovation?

While overly restrictive regulations might limit innovation, well-designed regulations aim to create a safe and trustworthy environment that encourages responsible innovation and long-term benefits.

How can individuals stay informed about AI regulations?

Individuals can follow updates from government agencies, international organizations, industry groups, and reputable news sources that cover technology policy and AI developments.

What is the future outlook for AI regulation?

AI regulation is expected to evolve continuously as technology advances. Greater international cooperation and the development of standardized frameworks are likely to play key roles in shaping future AI governance.
