As you navigate the rapidly evolving landscape of artificial intelligence, it is crucial to grasp the foundational principles of ethical AI. At its core, ethical AI refers to the development and deployment of AI systems that prioritize human values, fairness, and accountability. This concept transcends mere compliance with legal standards; it embodies a commitment to creating technology that enhances human life while minimizing harm.

You must recognize that ethical AI is not just a technical challenge but a moral imperative that requires a holistic approach involving diverse stakeholders. Understanding ethical AI also involves acknowledging the potential consequences of AI systems on society. These technologies can influence decision-making processes, shape public opinion, and even alter the fabric of daily life.

As a leader, you have a responsibility to ensure that your organization’s AI initiatives align with ethical standards.

By doing so, you can help build trust with your customers and stakeholders, ensuring that your organization remains at the forefront of responsible innovation.

Key Takeaways

- Ethical AI requires understanding foundational principles and prioritizing fairness and transparency.

- Balancing innovation with ethical responsibility is crucial to prevent harm and bias in AI systems.

- Transparency, accountability, and regulation are key to maintaining trust in AI technologies.

- Addressing privacy concerns and ethical dilemmas in autonomous systems is essential for responsible AI use.

- Promoting education and collaboration fosters the development and implementation of ethical AI practices.

The Importance of Ethical Considerations in AI Development

The importance of ethical considerations in AI development cannot be overstated. As AI systems become increasingly integrated into various sectors, the potential for unintended consequences grows. You must recognize that these technologies can perpetuate existing biases, exacerbate inequalities, and even infringe on individual rights if not developed with care.

Ethical considerations serve as a guiding framework to navigate these complexities, ensuring that AI serves humanity rather than undermines it. Moreover, prioritizing ethics in AI development can enhance your organization’s reputation and competitive advantage. Consumers are becoming more discerning about the technologies they engage with, often favoring companies that demonstrate a commitment to ethical practices.

By embedding ethical considerations into your AI strategy, you not only mitigate risks but also position your organization as a leader in responsible innovation. This proactive approach can foster customer loyalty and attract top talent who share your values.

Balancing Innovation and Ethical Responsibility in AI

Balancing innovation with ethical responsibility is one of the most significant challenges you face as a leader in the age of AI. On one hand, the potential for innovation is immense; AI can drive efficiency, enhance decision-making, and unlock new business models. On the other hand, the rapid pace of technological advancement can lead to ethical oversights if not carefully managed.

You must cultivate a culture that encourages innovation while simultaneously prioritizing ethical considerations. To achieve this balance, consider implementing frameworks that guide your team in evaluating the ethical implications of their work. Encourage open dialogue about potential risks and benefits associated with AI initiatives.

By fostering an environment where ethical discussions are normalized, you empower your team to innovate responsibly. This approach not only mitigates risks but also enhances creativity, as diverse perspectives contribute to more robust solutions.

Addressing Bias and Fairness in AI Algorithms

Addressing bias and fairness in AI algorithms is paramount to ensuring ethical outcomes. Algorithms are only as good as the data they are trained on; if that data reflects societal biases, the resulting AI systems tend to reproduce those biases unless they are actively corrected. As a leader, you must prioritize diversity in your data sources and actively seek to identify and mitigate biases throughout the development process.

This requires a commitment to continuous monitoring and evaluation of your algorithms. Moreover, fostering a culture of inclusivity within your organization can significantly enhance your ability to address bias in AI. Diverse teams bring varied perspectives that can help identify potential blind spots in algorithm design and implementation.

By promoting diversity in hiring and encouraging collaboration across disciplines, you can create a more equitable approach to AI development. This not only improves the fairness of your algorithms but also strengthens your organization’s reputation as a responsible innovator.

Ethical Implications of AI in Decision-Making Processes

| Metric | Description | Measurement Method | Example Value |
|---|---|---|---|
| Bias Detection Rate | Percentage of AI outputs flagged for potential bias | Statistical analysis of model outputs across demographic groups | 3.5% |
| Transparency Score | Degree to which AI decision-making processes are explainable | Expert evaluation using explainability frameworks | 7.8 / 10 |
| Data Privacy Compliance | Percentage of AI systems adhering to data protection regulations | Audit of data handling and storage practices | 95% |
| Fairness Index | Measure of equitable treatment across user groups | Comparative outcome analysis for protected attributes | 0.92 (1 = perfectly fair) |
| Accountability Mechanisms | Presence of processes to address AI errors and harms | Review of organizational policies and incident reports | Implemented in 85% of projects |
| Environmental Impact | Energy consumption per AI training cycle (kWh) | Monitoring power usage during model training | 120 kWh |
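
To make the Fairness Index row more concrete, here is a minimal Python sketch of one way such a number could be computed: the ratio of the lowest to the highest positive-outcome rate across groups, so that 1 means every group is treated identically. The table does not say which definition it uses, so this ratio is an assumption, and the function names (`selection_rates`, `fairness_ratio`) and the sample `decisions` data are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where
    `outcome` is True for a favorable decision.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def fairness_ratio(records):
    """Ratio of the lowest to the highest group selection rate.

    This is one assumed definition of a fairness index, not
    necessarily the one behind the table above.
    """
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        # No group received a favorable outcome, so rates are equal.
        return 1.0
    return min(rates.values()) / highest


# Hypothetical decisions for two groups, for illustration only.
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", True),
]

print(selection_rates(decisions))
print(round(fairness_ratio(decisions), 2))
```

With this sample data, group A is approved 75% of the time and group B 50% of the time, so the ratio is roughly 0.67. A single number like this is a starting point for discussion, not proof that a system is fair.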

The integration of AI into decision-making processes raises significant ethical implications that you must consider. As organizations increasingly rely on AI for critical decisions—ranging from hiring to loan approvals—the potential for bias and discrimination becomes more pronounced. You must ensure that these systems are designed to support fair and equitable outcomes rather than reinforce existing disparities.

To navigate these ethical challenges, it is essential to establish clear guidelines for how AI will be used in decision-making processes. This includes defining the criteria for algorithmic transparency and accountability.

Ultimately, ethical decision-making in AI requires a commitment to continuous improvement and a willingness to adapt as new challenges arise.

Ensuring Transparency and Accountability in AI Systems

Ensuring transparency and accountability in AI systems is critical for fostering trust among users and stakeholders. As you implement AI technologies within your organization, it is essential to provide clear explanations of how these systems operate and make decisions. Transparency not only demystifies AI but also empowers users to understand the rationale behind automated outcomes.

Accountability goes hand in hand with transparency; you must establish mechanisms for holding individuals and teams responsible for the outcomes produced by AI systems. This includes creating clear lines of accountability for algorithmic decisions and ensuring that there are processes in place for addressing grievances or errors. By prioritizing transparency and accountability, you can cultivate a culture of trust that encourages responsible use of AI technologies.

Ethical Considerations in the Use of AI for Surveillance and Privacy

The use of AI for surveillance raises profound ethical considerations that demand your attention as a leader. While these technologies can enhance security and efficiency, they also pose significant risks to individual privacy and civil liberties. You must carefully evaluate the implications of deploying surveillance technologies powered by AI, considering both their potential benefits and drawbacks.

To navigate these ethical dilemmas, it is essential to establish clear policies governing the use of surveillance technologies within your organization. This includes defining acceptable use cases, implementing safeguards to protect individual privacy, and ensuring compliance with relevant regulations. By taking a proactive approach to privacy concerns, you can mitigate risks while still leveraging the benefits of AI-driven surveillance solutions.

The Role of Regulations and Governance in Ethical AI

Regulations and governance play a crucial role in shaping the landscape of ethical AI development. As governments around the world begin to establish frameworks for regulating AI technologies, you must stay informed about these developments and adapt your strategies accordingly. Compliance with regulations is not merely a legal obligation; it is an opportunity to demonstrate your commitment to ethical practices.

Engaging with policymakers and industry groups can also help shape the future of AI governance. By participating in discussions about regulatory frameworks, you can advocate for standards that promote responsible innovation while addressing societal concerns. This collaborative approach not only positions your organization as a thought leader but also contributes to the broader goal of ensuring ethical AI development across industries.

Ethical Dilemmas in Autonomous AI Systems

The rise of autonomous AI systems presents unique ethical dilemmas that require careful consideration. As these technologies become more capable of making decisions without human intervention, questions arise about accountability, safety, and moral responsibility. You must grapple with the implications of deploying autonomous systems in high-stakes environments such as healthcare or transportation.

To address these dilemmas, it is essential to establish clear guidelines governing the use of autonomous AI systems within your organization. This includes defining acceptable levels of autonomy, implementing safety protocols, and ensuring that human oversight remains integral to decision-making processes. By prioritizing ethical considerations in the deployment of autonomous systems, you can mitigate risks while harnessing their potential benefits.
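
As a rough illustration of keeping human oversight in the loop, the Python sketch below routes a proposed decision to a person whenever it is high-stakes or the system's confidence falls below a threshold. The `Decision` class, the `route` function, and the 0.9 threshold are all hypothetical choices made for this example; your own acceptable level of autonomy would need to be set by policy.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A decision proposed by an autonomous system (hypothetical shape)."""
    action: str
    confidence: float  # between 0.0 and 1.0
    high_stakes: bool  # e.g. a healthcare or transportation context


def route(decision, min_confidence=0.9):
    """Return 'auto' if the system may act alone, else 'human_review'.

    The threshold is an assumed policy value, not a recommendation.
    """
    if decision.high_stakes or decision.confidence < min_confidence:
        return "human_review"
    return "auto"


print(route(Decision("send_reminder", 0.97, high_stakes=False)))
print(route(Decision("adjust_dosage", 0.99, high_stakes=True)))
```

In this sketch a high-stakes decision always goes to a person, however confident the system is, which reflects the idea that autonomy limits should depend on the context and not only on model scores.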

Promoting Ethical AI Education and Awareness

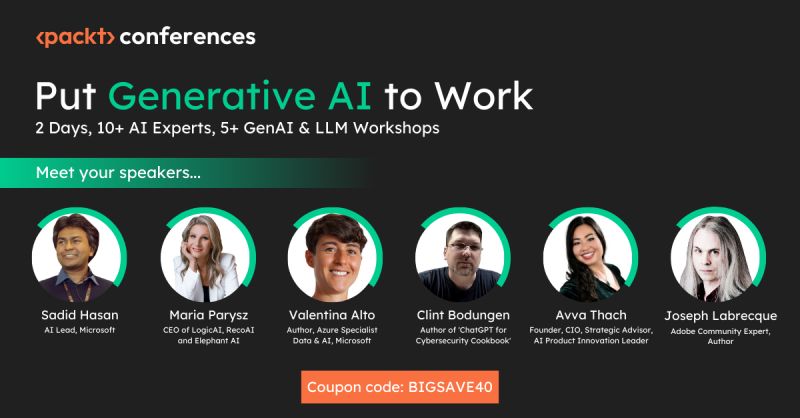

Promoting ethical AI education and awareness is vital for fostering a culture of responsibility within your organization. As technology continues to evolve rapidly, it is essential that your team understands the ethical implications associated with their work. You should invest in training programs that equip employees with the knowledge and skills needed to navigate the complexities of ethical AI development.

Encouraging ongoing dialogue about ethics in technology can also help raise awareness among stakeholders outside your organization. By sharing insights through workshops, webinars, or thought leadership articles, you can contribute to a broader conversation about responsible innovation in AI. This commitment to education not only enhances your organization’s reputation but also empowers individuals to make informed decisions about their work.

Collaborative Efforts for Ethical AI Development and Implementation

Collaborative efforts are essential for advancing ethical AI development and implementation across industries. As you engage with other organizations, academic institutions, and policymakers, you can share best practices and insights that contribute to responsible innovation. Collaboration fosters an environment where diverse perspectives come together to address complex challenges associated with AI technologies.

By participating in industry consortia or partnerships focused on ethical AI, you can amplify your impact while learning from others’ experiences. These collaborative initiatives can lead to the establishment of shared standards and frameworks that promote responsible practices across sectors. Ultimately, working together toward common goals enhances the potential for positive societal outcomes while mitigating risks associated with unethical practices in AI development.

In conclusion, as you navigate the complexities of artificial intelligence within your organization, prioritizing ethics is paramount. By understanding the basics of ethical AI, addressing bias, ensuring transparency, and promoting education, you can position your organization as a leader in responsible innovation. Embrace collaboration as a means to advance ethical practices across industries while remaining vigilant about the implications of emerging technologies on society at large.

In the rapidly evolving landscape of artificial intelligence, the ethical implications of AI development and deployment are becoming increasingly critical. A related article that delves into the transformative potential of AI in coaching while addressing ethical considerations is titled “Embracing AI: Transforming Your Coaching Practice.” This piece explores how coaches can integrate AI tools responsibly, ensuring that ethical standards are upheld while enhancing their practice. You can read more about it [here](https://iavva.ai/2025/09/01/embracing-ai-transforming-your-coaching-practice-2/).

FAQs

What is Ethical AI?

Ethical AI refers to the development and deployment of artificial intelligence systems in a manner that aligns with moral values, fairness, transparency, and respect for human rights. It aims to ensure AI technologies benefit society without causing harm or bias.

Why is Ethical AI important?

Ethical AI is important because AI systems can significantly impact individuals and society. Ensuring ethical standards helps prevent discrimination, protects privacy, promotes accountability, and fosters trust in AI technologies.

What are common ethical concerns in AI?

Common ethical concerns include bias and discrimination, lack of transparency, privacy violations, accountability for AI decisions, and the potential for AI to be used in harmful ways such as surveillance or autonomous weapons.

How can bias in AI be addressed?

Bias can be addressed by using diverse and representative training data, regularly auditing AI systems for unfair outcomes, involving multidisciplinary teams in AI development, and implementing fairness-aware algorithms.

What role does transparency play in Ethical AI?

Transparency involves making AI systems understandable and explainable to users and stakeholders. It helps ensure accountability, allows for informed consent, and enables detection and correction of errors or biases.

Are there guidelines or frameworks for Ethical AI?

Yes, several organizations and governments have developed guidelines and frameworks, such as the IEEE’s Ethically Aligned Design, the EU’s Ethics Guidelines for Trustworthy AI, and principles from the Partnership on AI, to promote responsible AI development.

Who is responsible for ensuring AI ethics?

Responsibility lies with AI developers, companies, policymakers, and users. Developers must design ethical systems, companies should enforce ethical practices, policymakers need to regulate AI use, and users should be informed about AI impacts.

Can Ethical AI prevent misuse of AI technologies?

While Ethical AI principles aim to reduce misuse, they cannot completely prevent it. Continuous monitoring, regulation, and public awareness are necessary to mitigate risks associated with AI misuse.

How does Ethical AI impact privacy?

Ethical AI prioritizes protecting individuals’ privacy by minimizing data collection, securing data storage, ensuring data anonymization, and obtaining informed consent for data use.
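
As a small illustration of data minimization, the Python sketch below keeps only an approved set of fields and replaces the direct identifier with a keyed hash. Note that this is pseudonymization, not full anonymization: anyone holding the key can link records back to a person. The field names, `ALLOWED_FIELDS`, and both function names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical allow-list: only these fields are kept for analysis.
ALLOWED_FIELDS = {"age_band", "region"}


def pseudonymize(user_id, secret_key):
    """Replace an identifier with a keyed SHA-256 hash (HMAC)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record, secret_key):
    """Drop fields outside the allow-list and pseudonymize the user ID."""
    reduced = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    reduced["subject"] = pseudonymize(record["user_id"], secret_key)
    return reduced


# Hypothetical record and key, for illustration only.
raw = {
    "user_id": "u-1001",
    "email": "person@example.com",
    "age_band": "30-39",
    "region": "EU",
}
print(minimize(raw, secret_key=b"replace-with-a-managed-secret"))
```

The email address and raw user ID never leave this step, while the same user still maps to the same `subject` value, so records can be grouped without exposing who they belong to.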

What is the future outlook for Ethical AI?

The future of Ethical AI involves ongoing research, improved regulations, greater public engagement, and the integration of ethical considerations into all stages of AI development to create trustworthy and beneficial AI systems.
