In an era where artificial intelligence (AI) is rapidly transforming industries and reshaping societal norms, the importance of ethical AI cannot be overstated. As organizations increasingly rely on AI to drive decision-making processes, the ethical implications of these technologies become paramount. Ethical AI is not merely a buzzword; it represents a commitment to ensuring that AI systems are designed and implemented in ways that respect human rights, promote fairness, and enhance societal well-being.

The stakes are high: unethical AI can cause significant harm, including discrimination, privacy violations, and erosion of public trust. Moreover, the ethical considerations surrounding AI extend beyond regulatory compliance. They encompass a broader responsibility to foster innovation that aligns with societal values.

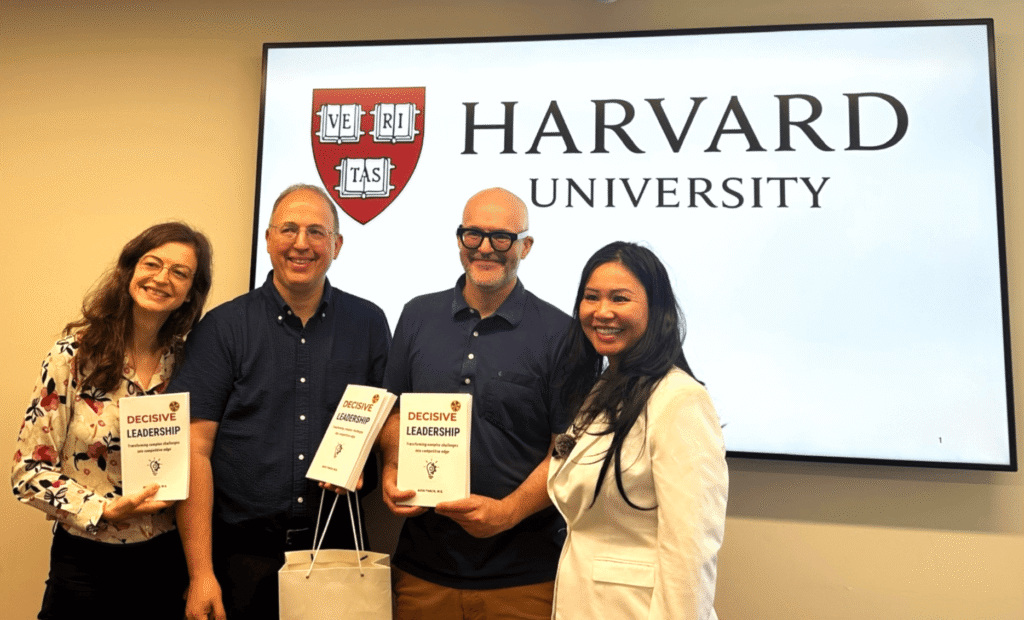

C-suite executives should recognize that ethical AI can serve as a competitive advantage. Companies that prioritize ethical considerations in their AI strategies are more likely to build lasting relationships with customers and stakeholders, which supports sustainable growth. By embedding ethical principles into the core of AI initiatives, organizations can navigate the complexities of this technology while contributing positively to society.

Key Takeaways

- Ethical AI is crucial for ensuring technology benefits society while minimizing harm.

- Transparency, accountability, and fairness are key to building trust in AI systems.

- Addressing bias and protecting privacy are essential components of responsible AI development.

- Collaboration among stakeholders helps establish effective ethical guidelines and standards.

- Public education and ongoing efforts are vital for navigating the future challenges and opportunities of ethical AI.

Understanding the Impact of AI on Society

The impact of AI on society is profound and multifaceted. From healthcare to finance, education to transportation, AI technologies are revolutionizing how we live and work. However, this transformation comes with both opportunities and challenges.

On one hand, AI has the potential to enhance productivity, improve decision-making, and create new economic opportunities. On the other hand, it raises critical questions about job displacement, privacy, and the potential for misuse. As a leader in your field, you should understand that the societal impact of AI is not uniform; it varies across demographics and communities.

For instance, while some may benefit from increased efficiency and innovation, others may face job losses or increased surveillance. This disparity highlights the need for a nuanced approach to AI deployment that considers the diverse experiences and needs of all stakeholders. By fostering an inclusive dialogue about the societal implications of AI, organizations can better align their strategies with the values and expectations of the communities they serve.

Building Trust through Transparency and Accountability

Trust is a cornerstone of any successful relationship, and this holds true for the relationship between organizations and their stakeholders when it comes to AI. Building trust in AI systems requires a commitment to transparency and accountability. Organizations must be open about how their AI systems operate, including the data sources used, the algorithms employed, and the decision-making processes involved.

This transparency not only demystifies AI for users but also empowers them to make informed choices about their interactions with these technologies. Accountability is equally important in establishing trust. Organizations must take responsibility for the outcomes produced by their AI systems, particularly when those outcomes have significant implications for individuals and communities.

This includes implementing mechanisms for redress in cases where AI systems cause harm or perpetuate bias. By prioritizing transparency and accountability, organizations can foster a culture of trust that encourages collaboration and innovation while mitigating the risks associated with AI deployment.
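One way to make these commitments concrete is to log every automated decision together with the model version, the data sources, and a plain-language explanation, and to give the affected person a way to request review. The following is a minimal sketch, assuming a hypothetical `DecisionRecord` structure; every field name, the `request_appeal` helper, and the sample values are illustrative rather than taken from any particular system.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class DecisionRecord:
    """One logged AI decision. All field names are hypothetical."""
    subject_id: str            # who the decision affects
    model_version: str         # which model produced it
    data_sources: List[str]    # where the input data came from
    outcome: str               # what the system decided
    explanation: str           # plain-language reason shown to the user
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    appeal_reason: Optional[str] = None  # set when redress is requested


def request_appeal(record: DecisionRecord, reason: str) -> DecisionRecord:
    """Flag a decision for human review (the redress path)."""
    record.appeal_reason = reason
    return record


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


# Illustrative values only.
record = DecisionRecord(
    subject_id="applicant-001",
    model_version="credit-model-1.4",
    data_sources=["application_form", "payment_history"],
    outcome="declined",
    explanation="Debt-to-income ratio above the approval threshold",
)
request_appeal(record, "Income figure on file is out of date")
print(to_audit_line(record))
```

Keeping the explanation and the appeal reason in the same record means a reviewer can see what the system decided, why, and what the affected person disputes, without reconstructing the context later.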

Incorporating Ethical Principles into AI Development

Incorporating ethical principles into AI development is essential for creating systems that align with societal values. This process begins with defining a clear set of ethical guidelines that inform every stage of the AI lifecycle, from design to deployment. These principles should encompass fairness, accountability, transparency, privacy, and respect for human rights.

By embedding these values into the development process, organizations can ensure that their AI systems are not only effective but also responsible. Moreover, fostering a culture of ethics within organizations requires ongoing education and training for all employees involved in AI development. This includes not only data scientists and engineers but also product managers, marketers, and executives.

By cultivating an ethical mindset across the organization, companies can create a shared understanding of the importance of ethical AI and empower employees to make decisions that reflect these values. This holistic approach to ethics in AI development will ultimately lead to more responsible and impactful technologies.

Addressing Bias and Fairness in AI Algorithms

| Metric | Description | Example Measurement | Importance |
|---|---|---|---|
| Bias Detection Rate | Percentage of AI outputs tested for bias across demographic groups | 85% | High – Ensures fairness and reduces discrimination |
| Transparency Score | Level of clarity in AI decision-making processes | 7/10 | Medium – Builds trust and accountability |
| Data Privacy Compliance | Percentage of AI systems compliant with data protection regulations | 95% | High – Protects user data and privacy rights |
| Explainability Index | Degree to which AI decisions can be explained to users | 8/10 | High – Facilitates understanding and acceptance |
| Human Oversight Ratio | Proportion of AI decisions reviewed by humans | 30% | Medium – Prevents errors and unethical outcomes |
| Ethical Training Hours | Average hours of ethics training provided to AI developers | 20 hours/year | Medium – Promotes responsible AI development |
| Incident Response Time | Average time to address ethical issues in AI systems | 48 hours | High – Minimizes harm from ethical breaches |
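
Two of the metrics in the table above, Human Oversight Ratio and Incident Response Time, reduce to simple arithmetic over logged records. The sketch below assumes hypothetical record shapes (a `human_reviewed` flag per decision and an opened/resolved timestamp pair per incident); the sample data is made up purely so the arithmetic can be followed.

```python
from datetime import datetime


def human_oversight_ratio(decisions):
    """Share of AI decisions that a human reviewed (0.0 to 1.0)."""
    if not decisions:
        return 0.0
    reviewed = sum(1 for d in decisions if d["human_reviewed"])
    return reviewed / len(decisions)


def mean_response_hours(incidents):
    """Average hours between an incident being opened and resolved."""
    if not incidents:
        return 0.0
    total_seconds = sum(
        (resolved - opened).total_seconds() for opened, resolved in incidents
    )
    return total_seconds / len(incidents) / 3600


# Made-up sample: 10 decisions, 3 of them reviewed by a person.
decisions = [{"id": i, "human_reviewed": i < 3} for i in range(10)]

# Made-up sample: one incident resolved in 24 hours, one in 72 hours.
incidents = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 2, 9, 0)),
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 8, 9, 0)),
]

print(f"Human oversight ratio: {human_oversight_ratio(decisions):.0%}")
print(f"Mean incident response: {mean_response_hours(incidents):.1f} hours")
```

With this sample data the ratio is 30% and the mean response time is 48 hours, matching the example measurements in the table.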

One of the most pressing challenges in AI development is addressing bias and ensuring fairness in algorithms. Bias can enter through skewed training data or flawed algorithmic design, and it can lead to discriminatory outcomes that disproportionately affect marginalized groups. Leaders should recognize that addressing bias is not just a technical challenge; it is also an ethical imperative.

To combat bias in AI algorithms, organizations must adopt a proactive approach that includes diverse data collection practices, rigorous testing for bias, and continuous monitoring of algorithmic performance. Engaging with diverse stakeholders during the development process can provide valuable insights into potential biases and help create more equitable systems.

By prioritizing fairness in AI development, companies can contribute to a more just society while enhancing their reputation as responsible innovators.
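Testing for bias can start with something as simple as comparing outcome rates across groups. The sketch below is one possible check, not a complete fairness audit: it computes each group's approval rate, the gap between the highest and lowest rate (often called the demographic parity difference), and the ratio between them (the disparate impact ratio). The group labels, the sample data, and the 0.8 alert threshold are assumptions for illustration; the threshold echoes the "four-fifths" rule of thumb used in US employment guidance, and the right metric and cutoff depend on the application.

```python
from collections import defaultdict


def selection_rates(records):
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group rate."""
    return max(rates.values()) - min(rates.values())


def impact_ratio(rates):
    """Lowest group rate divided by the highest group rate."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Made-up sample: group A approved 8 of 10 times, group B 5 of 10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

ALERT_THRESHOLD = 0.8  # assumed cutoff for this sketch

rates = selection_rates(records)
ratio = impact_ratio(rates)
print(rates, f"gap={parity_gap(rates):.2f}", f"ratio={ratio:.3f}")
if ratio < ALERT_THRESHOLD:
    print("Flag for review: approval rates differ substantially across groups")
```

With this sample the rates are 0.8 and 0.5, so the ratio is 0.625 and the check flags the model for review. Running the same check on every retrained model is one way to turn continuous monitoring into a routine step.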

Ensuring Privacy and Data Protection in AI Systems

As AI systems increasingly rely on vast amounts of data to function effectively, ensuring privacy and data protection has become a critical concern. Organizations must navigate complex regulatory landscapes while also addressing public apprehensions about data security and surveillance. Protecting user privacy is not only a legal obligation but also a fundamental aspect of building trust with customers and stakeholders.

To safeguard privacy in AI systems, organizations should implement robust data governance frameworks that prioritize data minimization, consent management, and secure data storage practices. This includes anonymizing personal data whenever possible and ensuring that users have control over their information. Additionally, organizations should be transparent about how data is collected, used, and shared within their AI systems.

By prioritizing privacy and data protection, companies can foster trust among users while mitigating risks associated with data breaches and misuse.
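Data minimization and consent checks can be enforced in code before any record reaches an AI pipeline. The sketch below assumes a hypothetical record layout and an allow-list of fields (`ALLOWED_FIELDS`); it drops everything not on the list, skips records without consent, and replaces the direct identifier with a keyed hash. Note that this is pseudonymization rather than full anonymization: anyone holding the key can recompute the mapping, so the key must be stored and rotated securely.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical allow-list: only these fields may reach the model.
ALLOWED_FIELDS = {"age_band", "region", "purchase_total"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed SHA-256 hash."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> Optional[dict]:
    """Return a reduced copy of the record, or None if consent is missing."""
    if not record.get("consent"):
        return None
    cleaned = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    cleaned["user_ref"] = pseudonymize(record["user_id"], secret_key)
    return cleaned


# Illustrative values only; never hard-code a real key.
key = b"demo-key-do-not-use-in-production"
raw = {
    "user_id": "u-1029",
    "email": "person@example.com",
    "age_band": "30-39",
    "region": "EU",
    "purchase_total": 182.50,
    "consent": True,
}

print(minimize(raw, key))
print(minimize({**raw, "consent": False}, key))
```

An allow-list is used instead of a block-list so that newly added fields are excluded by default until someone decides they are needed.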

Establishing Ethical Guidelines for AI Use

Establishing ethical guidelines for AI use is essential for promoting responsible practices across industries. These guidelines should serve as a framework for decision-making at all levels of an organization and provide clear expectations for how AI technologies should be deployed.

Moreover, these guidelines should be adaptable to evolving technologies and societal expectations. As AI continues to advance rapidly, organizations must remain vigilant in updating their ethical frameworks to address new challenges and opportunities. Engaging with external experts, industry peers, and advocacy groups can provide valuable insights into best practices for ethical AI use.

By establishing clear ethical guidelines, organizations can navigate the complexities of AI deployment while fostering a culture of responsibility and accountability.

Collaborating with Stakeholders to Create Ethical AI Standards

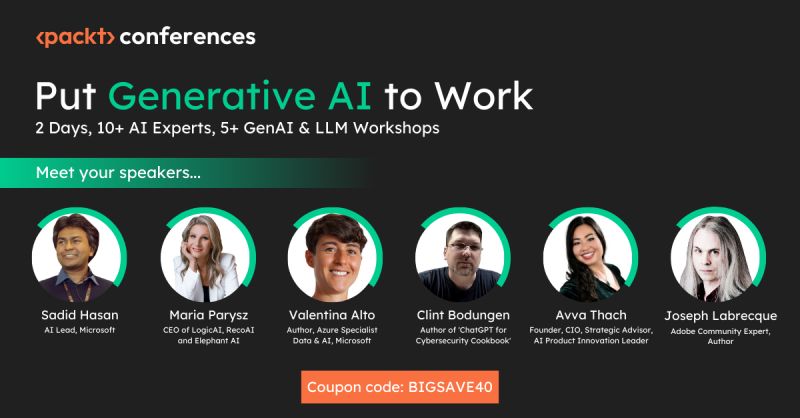

Collaboration among stakeholders is crucial for creating effective ethical standards for AI development and use. This includes engaging with industry peers, regulatory bodies, academic institutions, civil society organizations, and affected communities. By fostering dialogue among diverse stakeholders, organizations can gain valuable perspectives on the ethical implications of AI technologies and work towards developing standards that reflect shared values.

Collaborative efforts can also lead to the establishment of industry-wide best practices that promote responsible AI use across sectors. By participating in multi-stakeholder initiatives focused on ethical AI standards, organizations can contribute to shaping a more equitable landscape for technology deployment while enhancing their credibility as responsible innovators. Ultimately, collaboration is key to addressing the complex challenges posed by AI while ensuring that its benefits are distributed fairly across society.

Implementing Ethical AI in Business and Industry

Implementing ethical AI in business and industry requires a strategic approach that aligns ethical principles with organizational goals. This involves integrating ethical considerations into every aspect of the business model—from product development to marketing strategies—ensuring that ethical practices are embedded within the organizational culture. Leaders must champion these efforts by setting clear expectations for ethical behavior and holding teams accountable for adhering to established guidelines.

Additionally, organizations should invest in training programs that equip employees with the knowledge and skills needed to navigate ethical dilemmas related to AI deployment. This includes fostering critical thinking skills that enable employees to assess potential risks associated with their work while encouraging open discussions about ethical challenges within teams. By prioritizing ethical implementation in business practices, organizations can position themselves as leaders in responsible innovation while building trust with customers and stakeholders.

Educating the Public about Ethical AI and its Benefits

Educating the public about ethical AI is essential for fostering informed discussions about its implications and benefits. As technology continues to evolve rapidly, many individuals may feel overwhelmed or apprehensive about its impact on their lives. Organizations have a responsibility to demystify AI technologies by providing accessible information about how they work, their potential benefits, and the ethical considerations involved.

Public education initiatives can take various forms—ranging from community workshops to online resources—that aim to engage diverse audiences in meaningful conversations about ethical AI. By empowering individuals with knowledge about these technologies, organizations can help build public trust while encouraging responsible use of AI systems. Furthermore, fostering an informed public discourse around ethical AI can lead to greater accountability among developers and policymakers alike.

The Future of Ethical AI: Challenges and Opportunities

The future of ethical AI presents both challenges and opportunities as technology continues to advance at an unprecedented pace. As organizations strive to navigate this evolving landscape, they must remain vigilant in addressing emerging ethical dilemmas while seizing opportunities for innovation that align with societal values. The challenge lies in balancing rapid technological advancements with responsible practices that prioritize human well-being.

However, this landscape also offers significant opportunities for organizations willing to lead by example in promoting ethical AI practices. By embracing transparency, accountability, fairness, privacy protection, stakeholder collaboration, and public education, companies can position themselves as pioneers in responsible innovation while contributing positively to society at large. Ultimately, the future of ethical AI will depend on our collective commitment to shaping technologies that enhance human potential rather than diminish it. That vision is worth striving towards as we navigate this transformative era together.

In the ongoing discussion about Ethical AI, it’s essential to consider how leadership development can influence the implementation of AI technologies in organizations. A related article that explores this topic is “Leadership Development Coaching Strategies for Executives,” which highlights the importance of ethical considerations in leadership roles when integrating AI into business practices. You can read more about it [here](https://iavva.ai/business/leadership-development-coaching-strategies-executives/).

FAQs

What is Ethical AI?

Ethical AI refers to the development and deployment of artificial intelligence systems in a manner that aligns with moral values, fairness, transparency, and respect for human rights. It aims to ensure AI technologies benefit society without causing harm or bias.

Why is Ethical AI important?

Ethical AI is important because AI systems can significantly impact individuals and society. Ensuring ethical standards helps prevent discrimination, protects privacy, promotes accountability, and fosters trust in AI technologies.

What are the main principles of Ethical AI?

Common principles of Ethical AI include fairness, transparency, accountability, privacy, safety, and inclusivity. These principles guide the design, development, and use of AI systems to ensure they operate responsibly.

How can bias be addressed in AI systems?

Bias in AI can be addressed by using diverse and representative datasets, implementing fairness-aware algorithms, regularly auditing AI models, and involving multidisciplinary teams in the development process to identify and mitigate potential biases.

What role does transparency play in Ethical AI?

Transparency involves making AI systems understandable and explainable to users and stakeholders. It helps build trust, allows for informed decision-making, and enables the detection and correction of errors or biases.

Who is responsible for ensuring AI ethics?

Responsibility for AI ethics lies with developers, organizations, policymakers, and regulators. Collaboration among these groups is essential to establish guidelines, enforce standards, and promote ethical AI practices.

Are there existing guidelines or frameworks for Ethical AI?

Yes, several organizations and governments have developed guidelines and frameworks for Ethical AI, such as the IEEE’s Ethically Aligned Design, the EU’s Ethics Guidelines for Trustworthy AI, and the OECD Principles on AI.

Can Ethical AI prevent misuse of AI technologies?

While Ethical AI frameworks aim to reduce misuse by promoting responsible development and deployment, they cannot entirely prevent malicious use. Ongoing vigilance, regulation, and ethical education are necessary to mitigate risks.

How does Ethical AI impact privacy?

Ethical AI prioritizes the protection of individuals’ privacy by ensuring data is collected, stored, and used responsibly, with consent and security measures in place to prevent unauthorized access or misuse.

What challenges exist in implementing Ethical AI?

Challenges include defining universal ethical standards, addressing cultural differences, managing complex technical issues like bias and explainability, and balancing innovation with regulation.
