As artificial intelligence (AI) continues to evolve at an unprecedented pace, the need for a robust AI policy framework has never been more critical. The rapid integration of AI technologies into various sectors—from healthcare to finance—has sparked a global conversation about the implications of these advancements. Policymakers, industry leaders, and ethicists are grappling with the challenges posed by AI, including ethical dilemmas, regulatory requirements, and societal impacts.

The conversation is shifting from merely understanding AI’s capabilities to addressing how we can govern its use responsibly and effectively. AI policy encompasses a wide range of considerations, including ethical guidelines, regulatory frameworks, and the roles of various stakeholders in shaping the future of AI. As organizations increasingly rely on AI to drive decision-making and enhance operational efficiency, the need for clear policies that ensure responsible use becomes paramount.

This article will explore the multifaceted landscape of AI policy, examining ethical considerations, regulatory frameworks, and the collaborative efforts required to navigate this complex terrain.

Key Takeaways

- AI policy must address ethical concerns, including bias, fairness, transparency, and accountability.

- Effective regulation requires balancing innovation with necessary oversight to protect public interests.

- Governments play a crucial role in shaping AI policy and fostering international collaboration.

- Industry perspectives are vital for creating practical and forward-looking AI regulations.

- The future of AI policy depends on navigating complex ethical and regulatory challenges globally.

The Ethical Considerations of AI

The ethical implications of AI span a range of issues, including privacy, consent, accountability, and the potential for bias in AI algorithms.

As organizations increasingly leverage AI for decision-making, it is essential to establish ethical guidelines that prioritize human welfare and dignity. One of the most pressing ethical concerns is the potential for bias in AI systems. Algorithms trained on historical data can inadvertently perpetuate existing inequalities if not carefully monitored and adjusted.

For instance, facial recognition technologies have been shown to exhibit racial and gender biases, leading to significant ethical dilemmas regarding their deployment in law enforcement and surveillance. Addressing these biases requires a commitment to transparency and inclusivity in the development process, ensuring that diverse perspectives are considered in algorithm design.

The Regulatory Landscape of AI

The regulatory landscape surrounding AI is rapidly evolving as governments and international bodies seek to establish frameworks that govern its use. Countries are at different stages of developing AI regulations, with some leading the charge while others lag behind. The European Union has taken a proactive approach with its AI Act, a comprehensive regulation that entered into force in August 2024 and aims to ensure that AI technologies are safe and respect fundamental rights.

The Act takes a risk-based approach: obligations scale with the potential impact of an AI system on individuals and society, and organizations are expected to assess that impact. In contrast, other regions may adopt a more laissez-faire approach, prioritizing innovation over regulation. This divergence in regulatory strategies raises questions about how to create a cohesive global framework for AI governance.

As AI technologies transcend national borders, it becomes increasingly important for countries to collaborate on establishing common standards that promote safety, fairness, and accountability while fostering innovation.
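To make the idea of a risk-based assessment more concrete, the sketch below triages hypothetical AI systems into coarse risk tiers. The `SystemProfile` fields, the scoring weights, and the tier names are all assumptions chosen for illustration; they are not taken from the EU AI Act or any other regulation, and a real assessment would follow the applicable legal text.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    """Hypothetical description of an AI system used for triage."""
    name: str
    affects_legal_rights: bool      # e.g. hiring, credit, or policing decisions
    processes_personal_data: bool
    human_review: bool              # a person can review and override outputs


def risk_tier(profile: SystemProfile) -> str:
    """Map a profile to an illustrative tier using made-up weights."""
    score = 0
    if profile.affects_legal_rights:
        score += 2
    if profile.processes_personal_data:
        score += 1
    if not profile.human_review:
        score += 1

    if score >= 3:
        return "high"
    if score >= 1:
        return "medium"
    return "low"


# Hypothetical systems, for illustration only.
systems = [
    SystemProfile("faq_chatbot", False, False, True),
    SystemProfile("product_recommender", False, True, True),
    SystemProfile("resume_screener", True, True, False),
]

tiers = {s.name: risk_tier(s) for s in systems}
for name, tier in tiers.items():
    print(f"{name}: {tier}")
```

The value of a triage like this is less in the exact weights than in forcing each system to be described against the same questions, so that higher-impact systems receive closer scrutiny.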

Balancing Innovation and Regulation in AI

Striking a balance between fostering innovation and implementing effective regulation is one of the most significant challenges facing policymakers today. On one hand, overly stringent regulations can stifle innovation and hinder the development of groundbreaking technologies that have the potential to transform industries and improve lives. On the other hand, a lack of regulation can lead to harmful consequences, including privacy violations, discrimination, and loss of public trust in AI systems.

To achieve this balance, policymakers must engage with industry stakeholders to fully understand the implications of proposed regulations. Collaborative approaches that involve input from technologists, ethicists, and business leaders can help create regulations that are both effective and conducive to innovation. Regulatory frameworks should also be adaptable enough to keep pace with the rapid evolution of AI technologies, allowing for iterative improvements based on real-world experience and outcomes.

The Role of Government in AI Policy

| Metric | Description | Current Value / Status | Region (Year) |
|---|---|---|---|
| Number of National AI Strategies | Total countries with official AI policy or strategy documents | 70+ | Global (2024) |
| AI Ethics Guidelines Published | Count of published AI ethics frameworks by governments and organizations | 50+ | Global (2024) |
| AI Regulation Implementation Rate | Percentage of countries actively enforcing AI-related regulations | 35% | Global (2024) |
| Investment in AI Governance Research | Annual funding allocated to AI policy and governance research | Increasing trend | Global (2024) |
| Public Awareness of AI Policy | Percentage of population aware of AI policy issues | 40% | OECD Countries (2023) |
| AI Policy Focus Areas | Top priorities in AI policy (e.g., privacy, bias, safety) | Privacy (85%), Bias Mitigation (75%), Safety (70%) | Global (2024) |
| AI Workforce Regulation | Policies addressing AI impact on employment and labor | Emerging in 40% of countries | Global (2024) |

Governments play a crucial role in shaping AI policy by establishing regulatory frameworks, funding research initiatives, and promoting public awareness about the implications of AI technologies. By taking an active role in AI governance, governments can help ensure that these technologies are developed and deployed responsibly. This includes investing in education and training programs that equip the workforce with the skills needed to thrive in an AI-driven economy.

Governments must also act as facilitators of collaboration between stakeholders, including academia, industry, and civil society. By fostering partnerships among these groups, governments can create an ecosystem that encourages innovation while addressing ethical concerns. This collaborative approach can lead to more comprehensive policies that reflect diverse perspectives and prioritize the public interest.

International Collaboration in AI Regulation

As AI technologies continue to advance globally, international collaboration becomes essential for effective regulation. The borderless nature of AI means that decisions made in one country can have far-reaching implications for others. Therefore, establishing international standards for AI governance is critical to ensuring consistency and fairness across jurisdictions.

Organizations such as the OECD and UNESCO have taken early steps toward global AI governance frameworks: the OECD adopted its AI Principles in 2019, and UNESCO adopted its Recommendation on the Ethics of Artificial Intelligence in 2021. These initiatives aim to bring together governments, industry leaders, and civil society organizations to develop shared principles for responsible AI use. By fostering international dialogue and cooperation, stakeholders can work towards creating a cohesive regulatory environment that promotes innovation while safeguarding human rights.

Industry Perspectives on AI Policy

Industry leaders have a unique perspective on AI policy as they navigate the complexities of integrating these technologies into their operations. Many organizations recognize the importance of responsible AI use and are proactively engaging with policymakers to shape regulations that support innovation while addressing ethical concerns. Industry associations are increasingly advocating for clear guidelines that promote transparency and accountability in AI development.

Moreover, businesses are beginning to understand that ethical considerations are not just regulatory requirements but also essential components of building trust with customers and stakeholders. Companies that prioritize ethical AI practices are likely to gain a competitive advantage as consumers become more discerning about the technologies they engage with. By aligning their practices with ethical standards, organizations can foster a culture of responsibility that resonates with their values and mission.

Addressing Bias and Fairness in AI

Addressing bias and fairness in AI is a critical aspect of developing responsible policies. As mentioned earlier, algorithms trained on biased data can perpetuate existing inequalities, leading to harmful outcomes for marginalized communities. To combat this issue, organizations must prioritize fairness in their AI systems by implementing rigorous testing protocols and continuously monitoring performance.

One effective approach is to adopt diverse datasets that reflect a wide range of perspectives and experiences. By ensuring that training data is representative of different demographics, organizations can reduce the risk of bias in their algorithms. Additionally, involving diverse teams in the development process can lead to more equitable outcomes by incorporating varied viewpoints into decision-making.
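As one possible form the testing protocols described in this section could take, the sketch below computes per-group selection rates and the gap between them, a measure often called the demographic parity difference. The group labels and predictions are made-up data, and the function names are illustrative assumptions; this is only one of several fairness measures, and which one is appropriate depends on the application.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(groups, predictions):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: group membership and model decisions (1 = selected).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
gap = demographic_parity_gap(groups, predictions)
print(rates)  # {'a': 0.75, 'b': 0.25}
print(gap)    # 0.5
```

A gap near zero means the groups are selected at similar rates. Running a check like this on each model release is one way to turn "continuous monitoring" into a repeatable step.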

Ensuring Transparency and Accountability in AI

Transparency and accountability are fundamental principles that should underpin any effective AI policy framework. As AI systems become more complex, it is essential for organizations to provide clear explanations of how these technologies operate and make decisions. This transparency fosters trust among users and stakeholders while enabling them to understand the implications of AI deployment.

Accountability mechanisms must also be established to ensure that organizations take responsibility for their AI systems’ outcomes. This includes implementing processes for auditing algorithms and addressing any negative consequences that may arise from their use. By prioritizing transparency and accountability, organizations can demonstrate their commitment to ethical practices while mitigating potential risks associated with AI technologies.
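One way to support the auditing processes mentioned above is to keep a tamper-evident log of automated decisions. The sketch below chains each record to the previous one with a SHA-256 hash so that later edits can be detected. The record fields (`model`, `input_id`, `decision`) and the function names are hypothetical, and a production system would also need secure storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _entry_hash(prev_hash, record):
    """Hash the previous entry's hash together with this record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a decision record, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })
    return log


def verify_log(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decisions logged by an AI system.
log = []
append_record(log, {"model": "credit-v1", "input_id": 101, "decision": "denied"})
append_record(log, {"model": "credit-v1", "input_id": 102, "decision": "approved"})
print(verify_log(log))
```

If any stored record is changed after the fact, `verify_log` returns `False`, which gives auditors a simple integrity check before they review the decisions themselves.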

The Future of AI Policy

The future of AI policy will likely be shaped by ongoing advancements in technology as well as evolving societal expectations regarding ethical considerations. As public awareness of AI’s implications grows, there will be increasing pressure on organizations and governments to prioritize responsible practices. This shift may lead to more comprehensive regulations that address emerging challenges while fostering innovation.

Moreover, as global collaboration becomes more critical in shaping AI governance frameworks, we may see the emergence of international treaties or agreements focused on responsible AI use. These agreements could establish common standards for ethical practices while promoting cross-border cooperation in research and development efforts.

Navigating the Ethical and Regulatory Landscape of AI

Navigating the ethical and regulatory landscape of artificial intelligence presents both challenges and opportunities for organizations across sectors. As we move forward into an era defined by rapid technological advancements, it is essential for stakeholders—governments, industry leaders, ethicists—to engage in meaningful dialogue about how best to govern these powerful tools responsibly. By prioritizing ethical considerations alongside regulatory frameworks, we can create an environment where innovation thrives while safeguarding human rights and societal values.

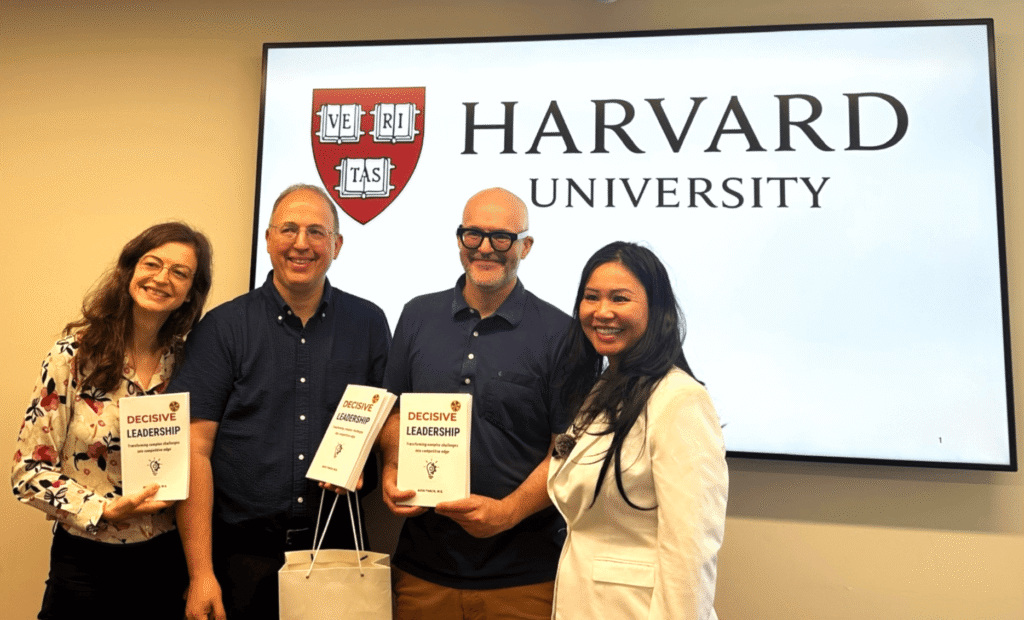


FAQs

What is AI policy?

AI policy refers to the set of guidelines, regulations, and frameworks developed by governments, organizations, and institutions to govern the development, deployment, and use of artificial intelligence technologies. It aims to ensure that AI is used ethically, safely, and responsibly.

Why is AI policy important?

AI policy is important because it helps address ethical concerns, privacy issues, security risks, and potential biases associated with AI systems. It also promotes innovation while protecting public interests and ensuring accountability in AI applications.

Who creates AI policies?

AI policies are typically created by government bodies, international organizations, industry groups, academic institutions, and sometimes private companies. Collaboration among these stakeholders is common to develop comprehensive and effective policies.

What are common goals of AI policy?

Common goals include ensuring transparency, fairness, and accountability in AI systems; protecting user privacy; promoting safety and security; fostering innovation; and addressing societal impacts such as job displacement and inequality.

How do AI policies impact businesses?

AI policies can affect how businesses develop and deploy AI technologies by imposing compliance requirements, data protection standards, and ethical guidelines. They may also influence investment decisions and market competitiveness.

Are there international AI policy standards?

While there is no single global standard, bodies such as the OECD, UNESCO, and the European Union have issued guidelines and frameworks intended to harmonize AI policies across countries.

What challenges exist in creating AI policies?

Challenges include keeping pace with rapid technological advancements, balancing innovation with regulation, addressing diverse ethical perspectives, ensuring global cooperation, and managing the complexity of AI systems.

How can individuals stay informed about AI policy developments?

Individuals can stay informed by following news from reputable sources, subscribing to updates from government agencies and international organizations, participating in public consultations, and engaging with academic and industry research on AI policy.
